What is a Data Pipeline? A Beginner-Friendly Guide

If you’re wondering what a data pipeline is, how it works, and why it matters, this guide is for you. Whether you're a data engineer, a marketer, or an executive, understanding how data pipelines power modern business is crucial.

Definition: A data pipeline is a system that moves data from one or more sources to one or more destinations—often transforming it along the way.

By the end of this article, you’ll understand:

- What a data pipeline is (with real-world analogies)

- Why data pipelines are essential to business operations

- The difference between batch and real-time pipelines

- Where tools like Estuary fit into modern data architecture

Whether you’re just data-curious or looking to improve your company’s data stack, you’re in the right place.

Who Needs to Understand Data Pipelines?

Data pipelines are often thought of as purely technical, but they impact far more people than just engineers.

In reality, a wide range of stakeholders across your organization rely on data pipelines, even if they don’t realize it. Understanding what they are and how they work can improve collaboration, decision-making, and data governance.

Common Stakeholders in a Data Pipeline:

- Data Engineers: The architects and mechanics of your data infrastructure. They design, build, and maintain pipelines to move and transform data.

- Software Engineers & IT Teams: While not always focused on pipelines directly, these professionals support the systems that pipelines run on, ensuring uptime, scalability, and performance.

- Data Analysts & Data Scientists: These teams rely on pipelines to deliver clean, timely data for analysis, modeling, and business intelligence.

- Business Leaders & Executives: Leaders increasingly rely on data to make strategic decisions. A basic understanding of pipeline workflows helps them ask better questions and manage risk.

- Marketing, Sales, and Ops Professionals: The “silent majority” of data users. These roles depend on dashboards, CRMs, and campaign tools fed by pipelines, even if they never see the backend.

“Even if you’ve never touched a database, data pipelines quietly power the insights and tools you rely on every day.”

Whether you’re a practitioner or decision-maker, knowing the basics of how data flows through your organization can help you catch issues faster, collaborate more effectively, and contribute to a healthier data culture.

What is a Data Pipeline?

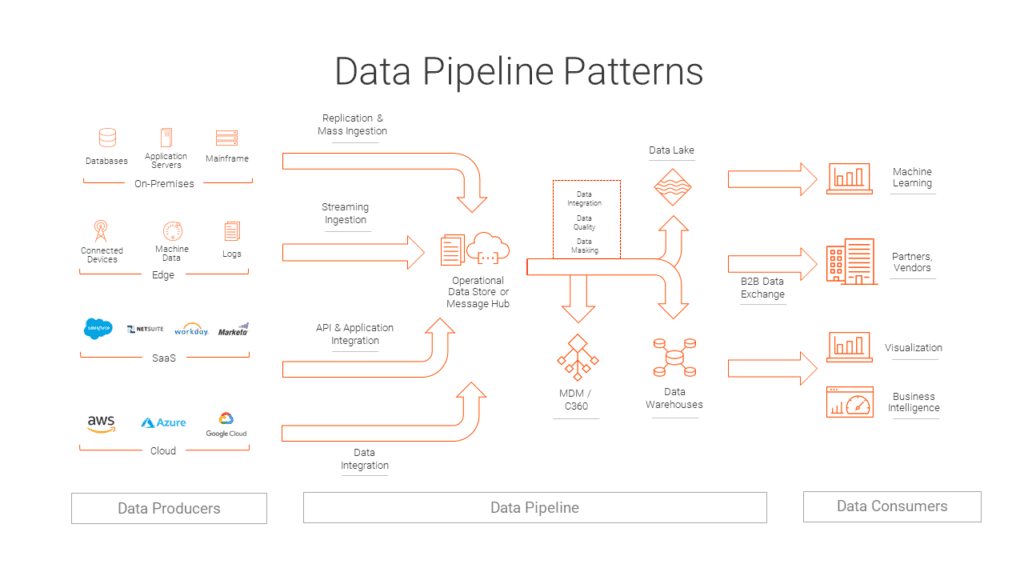

A data pipeline is a system that moves data from one or more source systems to one or more destination systems, often transforming it along the way.

In plain terms, a data pipeline is like plumbing for your organization's information: it ensures that clean, organized data flows from where it originates to where it's needed.

Why is it called a pipeline?

Imagine a water pipe that pulls clean water from a reservoir and delivers it to your home. A data pipeline works the same way. It:

- Extracts data from a source (like Salesforce or MySQL),

- Transforms it if needed (cleaning, reshaping, formatting),

- Delivers it to a destination like Snowflake or a dashboard.

And just like plumbing, when it's working right, you don't even notice it. But when it's broken, everything else grinds to a halt.
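To make the extract, transform, and deliver steps concrete, here is a minimal sketch in Python. It is only an illustration: the in-memory `SOURCE_ROWS` list stands in for a real source such as a CRM, the dictionary stands in for a warehouse, and every function name is hypothetical rather than part of any particular tool.

```python
from datetime import datetime

# Hypothetical rows standing in for a source system such as a CRM export.
SOURCE_ROWS = [
    {"id": 1, "email": " Ada@Example.com ", "signup": "2024-01-05"},
    {"id": 2, "email": "grace@example.com", "signup": "2024-02-10"},
]


def extract():
    """Read raw records from the source (here, an in-memory list)."""
    return list(SOURCE_ROWS)


def transform(rows):
    """Clean each record: trim and lowercase emails, parse date strings."""
    return [
        {
            "id": row["id"],
            "email": row["email"].strip().lower(),
            "signup": datetime.strptime(row["signup"], "%Y-%m-%d").date(),
        }
        for row in rows
    ]


def deliver(rows, destination):
    """Write cleaned records to the destination, keyed by id."""
    for row in rows:
        destination[row["id"]] = row
    return destination


def run_pipeline(destination):
    """Chain the three stages together."""
    return deliver(transform(extract()), destination)


# A plain dict plays the role of the destination in this sketch.
warehouse = run_pipeline({})
```

A production pipeline adds scheduling, retries, and error handling around these stages, but the overall shape stays the same.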

Common Components:

- Sources: CRMs, databases, APIs, cloud storage

- Pipeline engine: The middleware layer, often powered by platforms like Estuary

- Destinations: Data warehouses, real-time dashboards, SaaS tools

These systems are often part of broader terms you might’ve heard:

- ETL (Extract, Transform, Load)

- ELT (Extract, Load, Transform)

- Data ingestion or data integration

- Streaming data pipelines

As long as data moves from Point A to Point B—especially with scale or automation involved—it’s a pipeline.
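The practical difference between ETL and ELT is where the transformation runs: in the pipeline before loading, or inside the destination after loading. The sketch below contrasts the two, using Python's built-in `sqlite3` module as a stand-in for a warehouse. The table names and the `RAW_ORDERS` sample data are made up for illustration.

```python
import sqlite3

# Hypothetical raw records: amounts arrive as text from the source.
RAW_ORDERS = [("A-1", "19.99"), ("A-2", "5.00"), ("A-3", "12.50")]


def etl(conn):
    """ETL: transform in the pipeline first, then load the cleaned rows."""
    cleaned = [(order_id, float(amount)) for order_id, amount in RAW_ORDERS]
    conn.execute("CREATE TABLE orders_etl (order_id TEXT, amount REAL)")
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?)", cleaned)


def elt(conn):
    """ELT: load the raw rows as-is, then transform with SQL in the destination."""
    conn.execute("CREATE TABLE orders_raw (order_id TEXT, amount TEXT)")
    conn.executemany("INSERT INTO orders_raw VALUES (?, ?)", RAW_ORDERS)
    conn.execute(
        "CREATE TABLE orders_elt AS "
        "SELECT order_id, CAST(amount AS REAL) AS amount FROM orders_raw"
    )


conn = sqlite3.connect(":memory:")
etl(conn)
elt(conn)

# Both approaches end with the same typed data in the destination.
etl_total = conn.execute("SELECT SUM(amount) FROM orders_etl").fetchone()[0]
elt_total = conn.execute("SELECT SUM(amount) FROM orders_elt").fetchone()[0]
```

Both paths produce the same result here. The trade-off is that ELT keeps the raw data in the destination for later reprocessing, while ETL keeps the destination free of unclean data.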

Why Do Data Pipelines Matter?

Modern businesses run on data. But without a way to reliably move data across systems, all that information becomes siloed, stale, or unusable.

That’s where data pipelines come in.

Data pipelines enable organizations to deliver the right data, to the right place, at the right time—automatically.

From fraud detection to marketing analytics, nearly every team in your company depends on a working pipeline, even if they never touch the backend.

Common Business Use Cases for Data Pipelines

Fraud Detection

Financial institutions and eCommerce platforms use real-time pipelines to flag suspicious transactions instantly, minimizing risk and loss.

Inventory Management

Retail and logistics companies use pipelines to sync inventory across warehouses, ERPs, and fulfillment systems in real time.

Customer Personalization

Data from web traffic, shopping behavior, and CRMs can be piped into a central source to power dynamic recommendations and personalized experiences.

Campaign & Attribution Analytics

Marketing teams use pipelines to pull fresh data from platforms like Meta Ads, Google Ads, and Shopify into dashboards for near-instant reporting and ROI analysis.

Product Feedback Loops

SaaS companies collect product usage data to monitor adoption and performance, enabling faster iteration cycles and better user experiences.

Without pipelines, these workflows would either be impossible or painfully manual and error-prone.

As data volumes and velocity grow, pipelines ensure your business can scale without sacrificing speed, accuracy, or insight.

How Do Data Pipelines Work?

At a high level, most data pipelines follow a simple flow: Capture → Transform → Deliver → Monitor.

Let’s walk through each stage with a practical lens.

1. Capture (Data Ingestion)

The pipeline begins by extracting data from one or more source systems. These could include:

- Databases like PostgreSQL or MySQL

- SaaS tools like Salesforce, Shopify, or HubSpot

- Event streams (e.g., Kafka)

- Cloud storage like AWS S3 or Google Cloud Storage

This stage is also known as data ingestion. It may happen in:

- Batches (e.g., once daily)

- Real-time (via change data capture or event-driven sync)

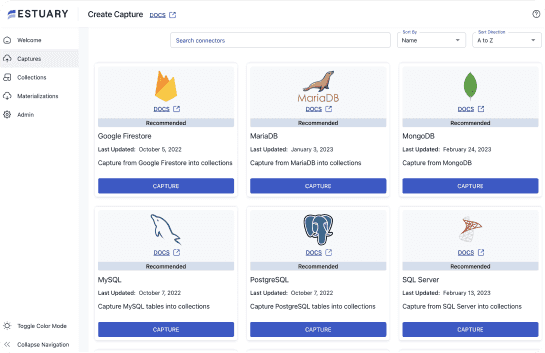

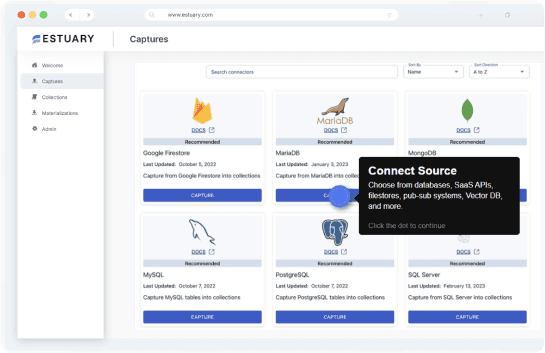

Platforms like Estuary support both batch and real-time ingestion across many popular sources.
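The two capture modes can be compared side by side in a small sketch. This is a simplified model, not how any specific platform implements ingestion: `SOURCE_TABLE` and `CHANGE_LOG` are hypothetical in-memory stand-ins for a database table and its change log, and the `seq` field is an assumed event sequence number.

```python
# Hypothetical source table (current state) and its change log (history of events).
SOURCE_TABLE = {
    1: {"id": 1, "status": "paid"},
    2: {"id": 2, "status": "pending"},
}
CHANGE_LOG = [
    {"seq": 1, "op": "insert", "row": {"id": 1, "status": "pending"}},
    {"seq": 2, "op": "insert", "row": {"id": 2, "status": "pending"}},
    {"seq": 3, "op": "update", "row": {"id": 1, "status": "paid"}},
]


def capture_batch(table):
    """Batch capture: take a snapshot of every row at the time of the run."""
    return list(table.values())


def capture_changes(log, after_seq):
    """Change-based capture: return only events newer than the last one seen."""
    return [event for event in log if event["seq"] > after_seq]


# A batch run reads the whole table.
snapshot = capture_batch(SOURCE_TABLE)

# A change-based run that already processed events 1 and 2 reads only event 3.
new_events = capture_changes(CHANGE_LOG, after_seq=2)
```

The batch snapshot shows the current state but not how it got there, while the change log preserves each individual event.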

2. Transformation

Before data is sent to its destination, it’s typically cleaned, normalized, or enriched. This step ensures consistency and usability.

Common transformations include:

- Removing duplicates

- Converting date formats

- Aggregating or joining tables

- Mapping fields to a predefined schema

Without transformation, your warehouse can quickly become a data swamp—a mass of unusable, inconsistent information.
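Three of the transformations listed above (field mapping, date conversion, and duplicate removal) might look like this in Python. The field names, the `FIELD_MAP` mapping, and the assumption that source dates arrive as MM/DD/YYYY are all illustrative.

```python
from datetime import datetime

# Hypothetical mapping from source field names to the destination schema.
FIELD_MAP = {"Email Address": "email", "Signup Date": "signup_date"}


def map_fields(record):
    """Keep only mapped fields and rename them to the destination schema."""
    return {FIELD_MAP[key]: value for key, value in record.items() if key in FIELD_MAP}


def normalize_date(value):
    """Convert an MM/DD/YYYY string to ISO 8601 (YYYY-MM-DD)."""
    return datetime.strptime(value, "%m/%d/%Y").strftime("%Y-%m-%d")


def clean_records(records):
    """Map fields, normalize values, and drop duplicate emails (first one wins)."""
    seen = set()
    cleaned = []
    for record in records:
        row = map_fields(record)
        row["email"] = row["email"].strip().lower()
        row["signup_date"] = normalize_date(row["signup_date"])
        if row["email"] in seen:
            continue  # duplicate of a record we already kept
        seen.add(row["email"])
        cleaned.append(row)
    return cleaned


raw = [
    {"Email Address": "ada@example.com", "Signup Date": "01/05/2024", "Notes": "vip"},
    {"Email Address": "ADA@example.com ", "Signup Date": "01/05/2024", "Notes": ""},
    {"Email Address": "grace@example.com", "Signup Date": "02/10/2024", "Notes": ""},
]
clean = clean_records(raw)
```

Note that the email is normalized before the duplicate check. Otherwise the two spellings of the same address would both slip through.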

3. Delivery (Loading into Destination)

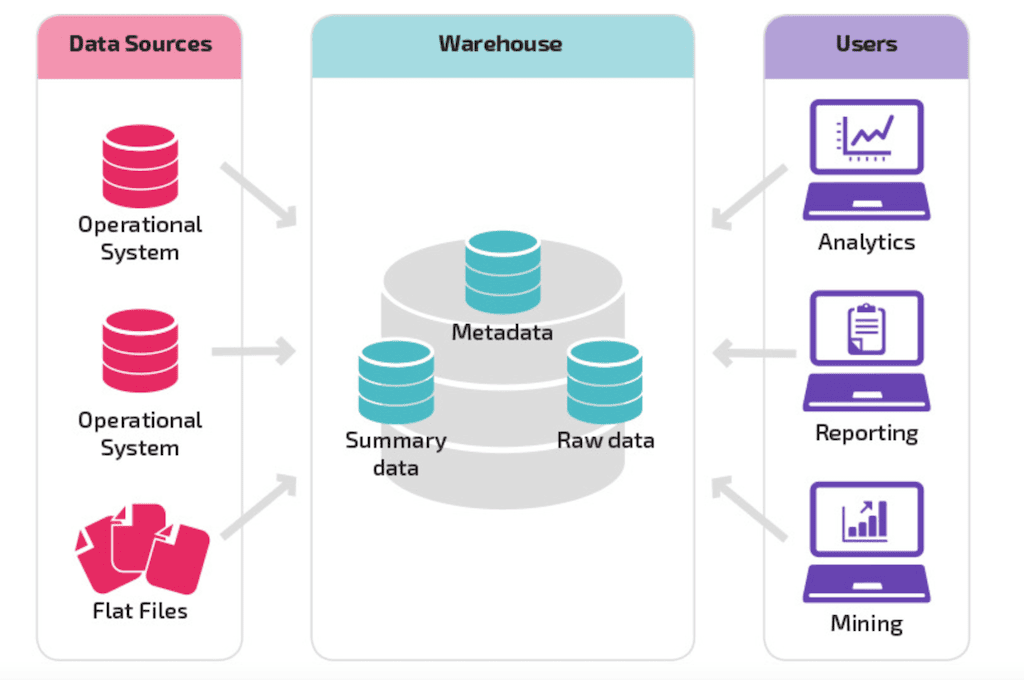

After transformation, data is delivered to its destination, such as:

- A data warehouse (e.g., Snowflake, BigQuery, Redshift)

- A data lake (e.g., Delta Lake, Iceberg)

- BI dashboards or operational tools

This is where your analytics, machine learning, and reporting workflows come alive.
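One property worth aiming for at the delivery stage is idempotency: re-running a load should not create duplicate rows. Below is a small sketch of that idea, with SQLite standing in for a real warehouse. The `customers` table and the `deliver` function are hypothetical, and real warehouses each have their own upsert or merge syntax.

```python
import sqlite3


def deliver(conn, rows):
    """Load rows into the destination table, replacing any row with the same id."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (id INTEGER PRIMARY KEY, email TEXT)"
    )
    with conn:  # commits on success, rolls back if an error is raised
        conn.executemany(
            "INSERT OR REPLACE INTO customers (id, email) VALUES (:id, :email)",
            rows,
        )


conn = sqlite3.connect(":memory:")
rows = [
    {"id": 1, "email": "ada@example.com"},
    {"id": 2, "email": "grace@example.com"},
]

deliver(conn, rows)
deliver(conn, rows)  # delivering the same batch again does not duplicate rows

row_count = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
```

Keying the load on a primary key is what makes a retry after a partial failure safe.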

4. Monitoring & Observability

Once deployed, data pipelines typically run on their own. But issues like schema drift, API failures, or connection errors can still occur.

To ensure reliability, your team should:

- Use built-in monitoring dashboards from your pipeline tool

- Leverage data observability platforms

- Set up automated tests or alerts for anomalies

A healthy pipeline is one that’s invisible when it works—and immediately obvious when it doesn’t.
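As one example of an automated test, the sketch below checks incoming records for schema drift. It is deliberately simple: `EXPECTED_SCHEMA` and `detect_schema_drift` are hypothetical names, and a real deployment would typically route these findings to an alerting system instead of returning a list.

```python
# Hypothetical expected schema: field name -> Python type.
EXPECTED_SCHEMA = {"id": int, "email": str}


def detect_schema_drift(record, expected=EXPECTED_SCHEMA):
    """Return a list of human-readable problems; an empty list means no drift."""
    issues = []
    for field, expected_type in expected.items():
        if field not in record:
            issues.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            issues.append(f"wrong type for {field}: {actual}")
    for field in record:
        if field not in expected:
            issues.append(f"unexpected field: {field}")
    return issues


# A record that matches the schema produces no issues.
ok = detect_schema_drift({"id": 1, "email": "ada@example.com"})

# A record whose id became a string, lost email, and gained phone is flagged.
drifted = detect_schema_drift({"id": "1", "phone": "555-0100"})
```

Running a check like this on a sample of each batch, or on every event in a stream, surfaces upstream changes before they reach dashboards.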

Batch vs. Real-Time Data Pipelines

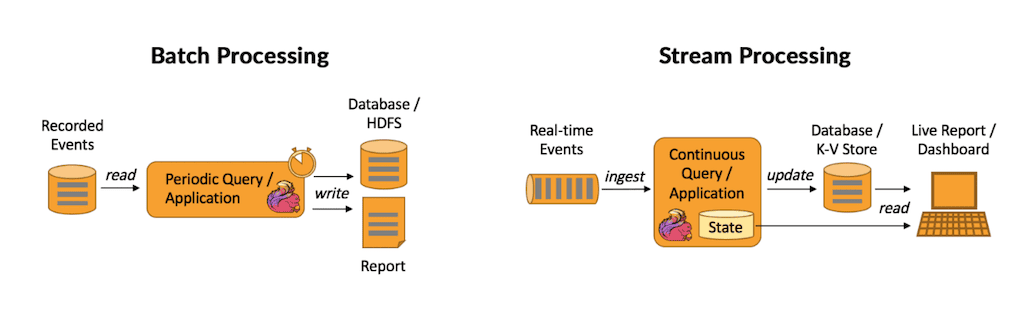

Not all data pipelines operate the same way. The two most common types are batch and real-time (streaming) pipelines. Each serves different business needs, and choosing the right one impacts cost, speed, and architecture.

Batch Pipelines

Batch pipelines move data in large chunks at scheduled intervals (e.g., hourly, nightly). They’re easier to implement and are often used for historical reporting or back-office workflows.

Batch data processing can be compute-intensive: many batch jobs rescan the entire source system on every run, even when only a small fraction of the data has changed.

Because batch jobs put so much load on the system, they were historically deferred to periods of low activity, such as nights or weekends. Most businesses ran their own on-premises servers, with limited compute and storage capacity.

Today, most companies use cloud infrastructure, so waiting for periods of low activity isn’t as much of a concern. However, batch processing can still increase costs in cloud infrastructure, and will always introduce at least some amount of latency.
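A rough back-of-the-envelope calculation shows why repeated full scans add up. The numbers below are invented for illustration, and rows read is only a crude proxy for cost: it ignores, for example, the always-on infrastructure that change-based pipelines may require.

```python
def full_rescan_rows(table_size, runs):
    """Rows read when every run scans the whole source table."""
    return table_size * runs


def change_based_rows(changes_per_run, runs):
    """Rows read when each run processes only what changed since the last run."""
    return changes_per_run * runs


# Hypothetical workload: a 1,000,000-row table, about 500 changed rows per hour,
# processed in 24 hourly runs.
rescan_rows = full_rescan_rows(1_000_000, 24)   # 24,000,000 rows read per day
change_rows = change_based_rows(500, 24)        # 12,000 rows read per day
```

In this made-up scenario, the full-rescan approach reads 2,000 times more rows per day to deliver the same changes.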

Common traits:

- Periodic scans of source systems

- High data latency (hours or more)

- Can be compute-intensive and costly in the cloud

- Often built using tools like Airflow, dbt, or custom scripts

Use case examples:

- Daily sales reports

- Monthly financial summaries

- CRM data syncing once a day

Real-Time Pipelines

Real-time pipelines move data continuously as new events happen. They’re built for agility, speed, and decision-making at the moment data is generated.

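Because they process only new or changed records instead of rescanning entire source systems, real-time pipelines are not only faster than batch pipelines but can also be more cost-effective, depending on the workload.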

Common traits:

- Event-based ingestion using CDC (Change Data Capture) or streaming platforms like Kafka

- Ultra-low latency (seconds or milliseconds)

- Ideal for live analytics and operational syncs

- Often powered by cloud-native platforms like Estuary

Use case examples:

- Fraud detection on transaction events

- Live customer segmentation and personalization

- Real-time inventory updates

“Real-time pipelines don’t just move faster—they let your business respond faster.”

Real-time architecture has historically been hard to implement, but modern platforms like Estuary make it far more accessible, removing the need to manage Kafka or write custom streaming code.
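To give a feel for what event-based ingestion does under the hood, here is a simplified sketch of applying change events to a destination copy of a table. It is a conceptual model only: the event format (`op` and `row` keys) is an assumption made for this example, not the format used by Kafka, Estuary, or any particular CDC tool.

```python
def apply_change(replica, event):
    """Apply one change event to an in-memory copy of the source table."""
    op = event["op"]
    row = event["row"]
    if op in ("insert", "update"):
        replica[row["id"]] = row  # upsert: the latest version of the row wins
    elif op == "delete":
        replica.pop(row["id"], None)  # ignore deletes for rows we never saw
    else:
        raise ValueError(f"unknown operation: {op}")
    return replica


# Hypothetical stream of inventory changes, in the order they occurred.
events = [
    {"op": "insert", "row": {"id": 1, "qty": 10}},
    {"op": "insert", "row": {"id": 2, "qty": 4}},
    {"op": "update", "row": {"id": 1, "qty": 9}},
    {"op": "delete", "row": {"id": 2}},
]

replica = {}
for event in events:
    apply_change(replica, event)
```

Each event touches a single row, so the destination stays current without ever rescanning the source. Applying events in order matters: swapping the update and the first insert would leave the wrong quantity.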

Data Pipeline Use Cases

To understand the value of data pipelines, it helps to look at how they’re applied in real-world business scenarios. Whether you're in eCommerce, finance, SaaS, or logistics, data pipelines power key workflows across your organization.

1. Operational Applications

Pipelines support event-driven applications that require fast data movement and response times.

Example use cases:

- Fraud detection systems for banking and fintech

- Inventory synchronization across multiple warehouses

- Price updates for rapidly shifting product catalogs

- Automated alerts and triggers in operations workflows

These use cases often depend on real-time pipelines to work effectively.

2. Business Intelligence & Analytics

Analytics teams depend on pipelines to move data from various sources into centralized repositories for exploration and modeling.

Example use cases:

- Marketing attribution dashboards (e.g., tracking campaign ROI)

- Revenue forecasting models

- Customer churn analysis

- Cross-channel reporting from tools like Google Ads, HubSpot, and Shopify

Without timely and reliable pipelines, teams are forced to pull stale data manually—or make decisions with partial information.

3. Data Centralization

As companies adopt more tools, data becomes scattered. Pipelines enable organizations to unify this data in a data warehouse or lakehouse architecture.

Benefits of centralization:

- A 360-degree view of the customer

- Simplified governance and compliance

- Faster decision-making across teams

Once the data is centralized, it can be used to power both analytics and reverse ETL workflows, pushing insights back into operational systems.

Conclusion: Why Every Organization Needs Data Pipelines

By now, you’ve seen that data pipelines are more than just backend infrastructure—they’re the silent engines powering critical business functions across every department.

From fraud detection to real-time dashboards, data pipelines enable faster decisions, cleaner data, and better customer experiences.

We’ve covered:

- What a data pipeline is

- The differences between batch and real-time processing

- The stages of pipeline architecture

- Real-world use cases across operations, marketing, and analytics

Even if you’re not a data engineer, understanding how data flows through your organization makes you a more effective stakeholder, collaborator, and decision-maker.

Whether you’re centralizing data, reducing reporting delays, or powering AI models, a resilient pipeline strategy is non-negotiable.

Get Started with Real-Time Pipelines — Without the Overhead

Estuary is a fully managed DataOps platform designed to make building real-time data pipelines as easy as setting up a form.

- No need to manage Kafka or Airflow

- Built-in schema enforcement and transformations

- Support for streaming and batch ingestion

- Dozens of prebuilt connectors for databases, SaaS tools, warehouses, and apps

👉 Start building your real-time pipeline for free with Estuary

Have questions or want to share how your team uses pipelines? Get in touch—we’d love to hear from you.


About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.