Database vs data warehouse vs data lake: Key differences and usage

We hear these terms used a lot, and to the uninitiated, they can sometimes seem interchangeable. So, what’s the difference between these types of data storage systems?

Database, data warehouse, data lake.

We hear these terms used a lot, and to the uninitiated, they can sometimes seem interchangeable. So, what’s the difference between these types of data storage systems? When does that difference matter? And most importantly, how can we use each storage type in smart data architecture?

Types of data storage systems

The most commonly used (and discussed) data storage types are defined as follows:

- A database is any collection of data stored in a computer system, which is designed to make data accessible.

- A data warehouse is a specific type of database (or group of databases) architected for analytical use.

- A data lake is a repository that stores structured and unstructured data in its native format, often in large volumes.

Now, let’s compare these storage types to understand them better and address some common points of confusion.

Database vs data warehouse

Databases have been around for decades: as long as businesses have been storing data electronically. As mentioned above, a database is technically any collection of structured data, which is why data warehouses fall under this umbrella.

XML, CSV, and spreadsheets are also types of databases. But when we use the term “database” today in the context of enterprise data architecture, we’re usually referring to a row-based, relational database (RBDMS). For the remainder of this article, that’s what we’ll be referring to when we say “database.”

Databases and data warehouses both store data in tables composed of rows and columns. Both can generally be queried with SQL, use keys, and have views. The difference between the two is how the rows and columns are treated and indexed. This relates to the type of data processing they’re designed for, and by extension, the type of use-case.

Databases prioritize the various rows in each table. A row represents a specific record; for instance, in a table of customer data, a given customer’s record would contain all the various fields for that one customer: name, address, age, etc. Databases are designed to power transactional systems, like point-of-sale, security, and financial systems — and to do so quite quickly. A term used to describe the structure of many of these databases is OLTP, or online transactional processing.

Databases are a familiar data storage method. Most of the time, they have been implemented for years within organizations for legacy workflows that enable day-to-day operations with low latency.

Data warehouses, in contrast, are a newer type of database architecture that is designed to allow the user to view and analyze data from a variety of perspectives at a high scale. Data warehouses allow you to easily shift focus across different dimensions of your data, especially at scale, thanks to improved indexing. Using our previous example, you could conduct in-depth analytics to gain more powerful insights across a large collection of customer data.

This flexible, analytics-first design makes data warehouses the storage solution of choice for big-data analytics, BI, and the modern data stack more generally. Many BI and analytics tools are designed to easily integrate with popular data warehouses.

The technical specifications and precise architectures for data warehouses are complex and can vary. In general, data warehouses tend to follow the OLAP (online analytical processing) structure. This models data as a “cube,” which can be flipped, re-arranged, and sliced to address all sorts of analytical problems much more easily than a classic relational database. So if you hear the term “OLAP database,” this almost always refers to a warehouse.

This design is a tradeoff, however. OLAP introduces latency to a database, making it poorly suited for the speedy, transactional tasks for which OLTP databases are designed.

Adopting data warehouses in addition to traditional databases has been an adjustment for many enterprises. However, it’s a necessity, especially as data volumes have grown exponentially and the demands of data analysis have direct business implications. Both are crucial to operating common business processes.

Neither databases nor data warehouses are by definition on-premise or in the cloud, however, you’re more likely to see an on-prem database, and most data warehouses are cloud-based.

Data warehouse vs data lake.

Along with data warehouses, data lakes are a newer type of data storage you’ll run into once you begin to modernize your data stack.

Data warehouses are highly structured and typically require data to fit into a schema. This requires all incoming data to be of the same type and format, and transformations are often needed to achieve this. Data lakes, on the other hand, are unstructured data repositories. They can store data in a variety of formats — even text, images, and video.

Generally speaking, data lakes allow you the freedom to store a huge amount of data quickly and without restriction. The downside is that data lakes are inherently disorganized, and if you don’t have the right infrastructure in place to extract your data and put it to work, it’ll get mired in what is loving referred to as a “data swamp.”

Data warehouses store clean, organized data that’s ready for analysis, but are often limited by time lag and storage costs.

Considered in a vacuum, the most obvious use-cases for a data lake are data exploration or training machine learning models. Data warehouse, as we’ve discussed, typically power analytical and BI tools. But in the context of a broader data architecture, this gets more interesting.

Real-life implications of data storage methods

It’s not unlikely that a modern, data-driven enterprise will use all three types of data storage: transactional databases, data warehouses, and data lakes. It’s not necessary to choose just one; in fact, the diverse usage of data often requires all three to power a large system.

As an example, an e-commerce company might use a database to power their online store directly: managing inventory, customer carts, and more. It might also have a data warehouse to feed the analytic tools they use for marketing and financial decisions. Finally, a data lake could provide a staging area for data in motion between various systems.

It’s also important to point out that data warehouses, data lakes, and databases are just three data storage architectures that are popular and relatively easy to understand. In reality, there are many more types of data storage systems designed to power the varied demands of modern analytics. Streaming databases are a great example that we didn’t cover today; you can learn about them here.

The challenge, of course, is making sure your different data storage systems remain in sync. There are various ways to do this, but we’ll use Estuary’s platform, Flow, as an example.

How data storage works using Estuary

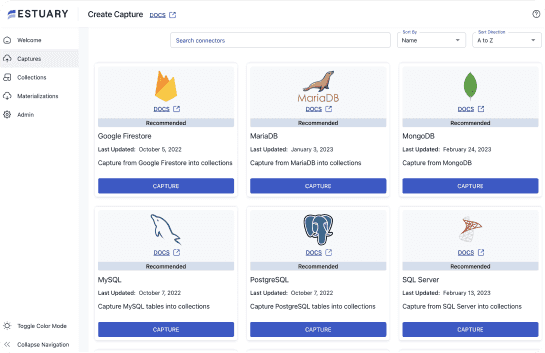

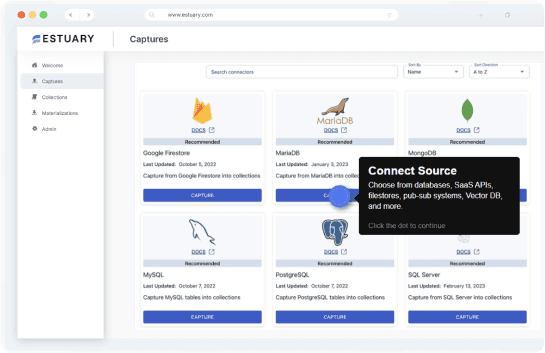

Flow is a data operations platform that integrates systems through a central data pipeline. As a Flow user, you’d already have your various systems for analysis and storage, including databases and data warehouses, as necessary. In order to keep these different storage systems in sync, Flow must load new data into them as it is collected.

Under the hood, the Flow data pipeline is based on a data lake. This can serve two purposes. First, it allows data to pass through easily and be directed to different storage endpoints in near-real-time. It also backfills data from these storage endpoints, which helps integrate historical data across your systems. Secondly, you can rely on Flow’s data lake as a storage backbone without worrying about it becoming a “data swamp.” This is because Flow automatically manages it and keeps it organized. Different schemas are applied to data collections as needed as they move through the pipeline.

In this example, the data lake doesn’t need to be your final storage solution, but it’s necessary to sync and optimize the other storage systems that power important workflows, whether analytical or transactional.

Of course, this isn’t the only possible data architecture, but it serves to demonstrate how being intentional and creative with the variety of data storage solutions available can help optimize your data stack. Transactional databases, data warehouses, and data lakes are distinct from one another, and when used properly, are powerful and effective forms of data storage.

If you’d like to learn more about Flow’s architecture, check out our docs or dig into the open-source code.

Rather see it in action? Try Flow for free today.

About the author

Olivia Iannone is a technical writer who creates clear, accessible content on data engineering, real-time systems, and developer tools.