“Data pipeline architecture” may sound like one of those ambiguous buzzwords tossed around in data engineering circles, but don’t underestimate its importance.

Well-designed architecture is what separates fragile, slow, and disorganized data pipelines from those that are scalable, efficient, and resilient. It’s the underlying blueprint that ensures your data flows smoothly from source to destination, gets transformed appropriately, and supports decision-making with speed and accuracy.

In 2025, as data volumes grow at unprecedented rates and AI-powered systems rely increasingly on real-time data, the role of robust data pipeline architecture has never been more critical. Mistakes in planning can lead to system-wide outages, compliance failures, poor analytics, and spiraling costs.

The good news? With the right knowledge and strategic design, you can build pipelines that serve not just today’s use cases but scale effortlessly for tomorrow’s.

In this comprehensive guide, you’ll learn:

- What data pipeline architecture is and why it matters more than ever in the modern data stack.

- The most common architecture patterns and processing approaches — from ETL vs ELT, to batch vs real-time, to data mesh vs monolith.

- Key technical and organizational considerations for building secure, cost-efficient, and future-proof pipelines in 2025.

By the end, you’ll understand the components, trade-offs, and strategies required to build and maintain a strong data infrastructure — whether you’re a data engineer, a CTO, or a business stakeholder looking to make sense of the complexity.

What is Data Pipeline Architecture?

At its core, a data pipeline is an automated process that moves and transforms data from one system to another, enabling insights, applications, and operations across the organization.

But there’s more than one way to build a pipeline. That’s where data pipeline architecture comes in.

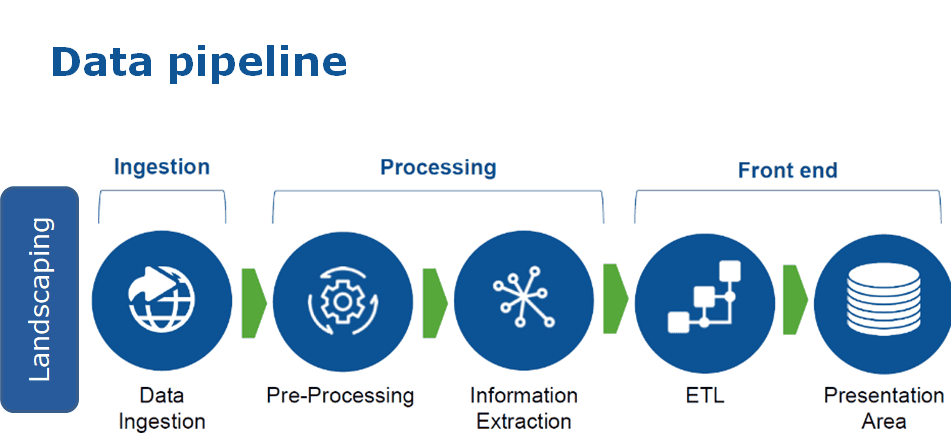

Definition: Data pipeline architecture refers to the design, layout, and orchestration of components that extract, process, transform, and deliver data across source systems and destinations.

This architecture governs how data is:

- Extracted from sources like databases, APIs, or IoT sensors,

- Transformed via cleaning, normalization, enrichment, and formatting,

- Loaded or served to destinations such as warehouses, lakehouses, dashboards, or machine learning models.
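As a rough illustration of these three stages, here is a minimal sketch in plain Python. The function names, the sample records, and the in-memory `warehouse` list are all assumptions made for the example; a real pipeline would read from and write to actual systems.

```python
from typing import Iterable, Iterator


def extract(rows: Iterable[dict]) -> Iterator[dict]:
    """Extract: yield raw records from a source (here, an in-memory list)."""
    yield from rows


def transform(records: Iterable[dict]) -> Iterator[dict]:
    """Transform: drop records without an email, normalize emails, cast amounts."""
    for record in records:
        if not record.get("email"):
            continue
        yield {
            "email": record["email"].strip().lower(),
            "amount": float(record["amount"]),
        }


def load(records: Iterable[dict], destination: list) -> int:
    """Load: append cleaned records to the destination and return the count."""
    count = 0
    for record in records:
        destination.append(record)
        count += 1
    return count


# Hypothetical sample data standing in for a database, API, or sensor feed.
raw_source = [
    {"email": " Ada@Example.com ", "amount": "19.90"},
    {"email": "", "amount": "5"},
    {"email": "bob@example.com", "amount": "7"},
]
warehouse: list = []
loaded = load(transform(extract(raw_source)), warehouse)
```

Because each stage is a generator, records flow through one at a time, and any stage can be swapped out without touching the others.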

Why It Matters in 2025

Data-driven organizations are no longer managing just structured tables or logs — they’re ingesting real-time clickstreams, video metadata, machine telemetry, unstructured text, and more.

And these systems must support:

- Streaming analytics for immediate decisions,

- AI/ML pipelines with high-throughput requirements,

- Regulatory audits and data privacy standards (like GDPR, CCPA),

- Cross-cloud data sharing without duplication (a fast-growing trend via Snowflake’s no-copy sharing or AWS zero-ETL).

Without sound architecture, data pipelines can become:

- Unreliable: prone to breakage when schemas evolve.

- Unscalable: collapsing under volume or velocity.

- Expensive: racking up cloud bills due to inefficient transformations.

- Siloed: keeping teams from working on shared, high-quality data.

A strong pipeline architecture ensures that:

- Your data flows reliably through the system with observability and recovery.

- It’s modular and scalable to handle growing volume.

- It’s compliant and secure by design.

- It supports both batch and real-time use cases flexibly.

Common Data Pipeline Architecture Patterns

When building data pipelines, you're not just solving a single problem. You're making a series of interconnected decisions that define how data is moved, processed, accessed, and scaled across your organization.

These decisions fall into three major categories:

- ETL vs ELT — When and where transformation happens

- Batch vs Real-Time — The timing and latency of data processing

- Siloed vs Monolith vs Mesh — The organizational shape of your data ecosystem

Let’s break each of these down in detail.

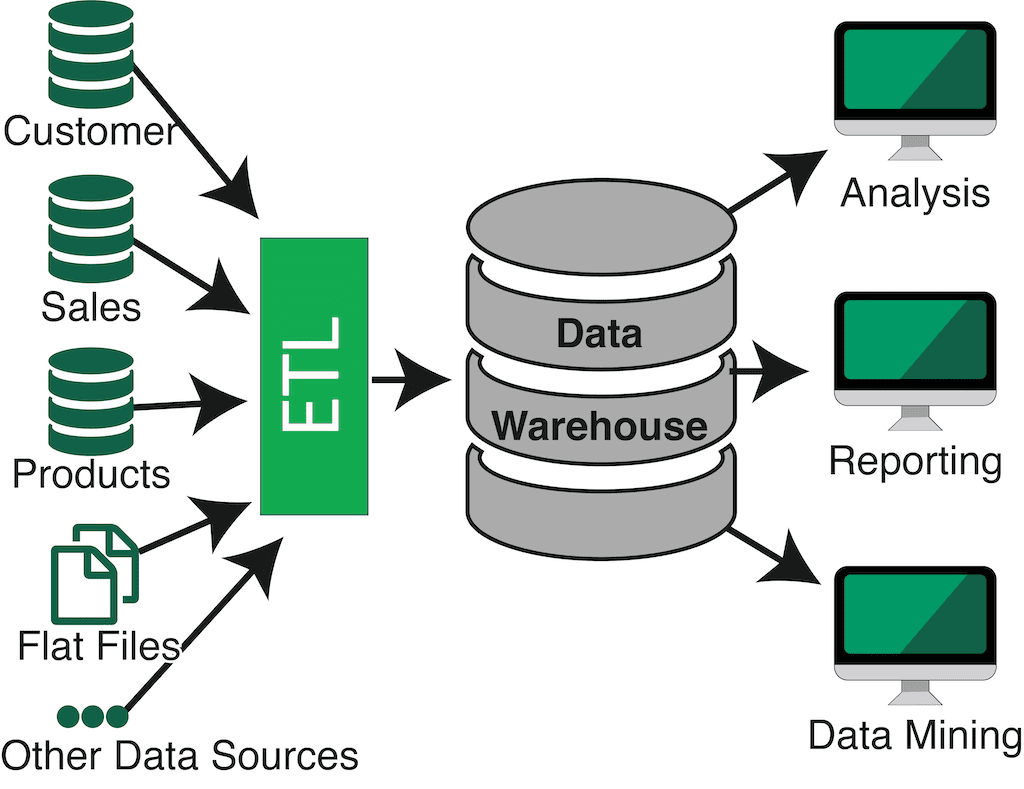

1. ETL vs ELT: When Should Data Be Transformed?

Every pipeline must transform raw data into something usable. But should transformation happen before loading it into your target system, or after?

| Model | Flow | Best For | Examples |
| --- | --- | --- | --- |
| ETL (Extract → Transform → Load) | Data is cleaned and reshaped before being loaded | Operational tools or systems that enforce a strict schema | Loading data into Salesforce, Stripe, or MySQL |
| ELT (Extract → Load → Transform) | Raw data is first ingested, then transformed inside the destination system | Analytical tools that can handle raw data formats and flexible compute | Loading into Snowflake, BigQuery, or Databricks |

What to Consider:

- ETL is ideal when the target system doesn’t allow malformed or partially cleaned data, like SaaS apps or transactional systems.

- ELT takes advantage of modern cloud platforms that separate storage from compute, allowing fast, on-demand transformation using SQL or Spark.

- Hybrid models are increasingly popular, where minimal cleansing (like deduplication or type enforcement) happens early, and full transformation happens downstream.
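The difference between the two models can be sketched with Python's built-in `sqlite3` module standing in for the destination system. The table names and sample rows are assumptions for illustration only; a cloud warehouse would behave similarly in principle but at very different scale.

```python
import sqlite3

# Hypothetical raw input: untrimmed string amounts and one duplicate row.
raw_orders = [("A-1", " 10.50 "), ("A-2", "3"), ("A-2", "3")]

conn = sqlite3.connect(":memory:")

# ETL: clean and deduplicate in application code, then load the finished result.
cleaned = sorted({(order_id, float(amount.strip())) for order_id, amount in raw_orders})
conn.execute("CREATE TABLE orders_etl (order_id TEXT PRIMARY KEY, amount REAL)")
conn.executemany("INSERT INTO orders_etl VALUES (?, ?)", cleaned)

# ELT: load the raw rows untouched, then transform with SQL inside the destination.
conn.execute("CREATE TABLE orders_raw (order_id TEXT, amount TEXT)")
conn.executemany("INSERT INTO orders_raw VALUES (?, ?)", raw_orders)
conn.execute(
    """
    CREATE TABLE orders_elt AS
    SELECT DISTINCT order_id, CAST(TRIM(amount) AS REAL) AS amount
    FROM orders_raw
    """
)

etl_rows = conn.execute(
    "SELECT order_id, amount FROM orders_etl ORDER BY order_id"
).fetchall()
elt_rows = conn.execute(
    "SELECT order_id, amount FROM orders_elt ORDER BY order_id"
).fetchall()
```

Both paths end with the same clean rows. What differs is where the work runs and whether the raw data is retained: the ELT path keeps `orders_raw` around, so the transformation can be rewritten and rerun later.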


Emerging Trend: Zero-ETL

Tools like:

- Amazon Aurora zero-ETL integration with Amazon Redshift

- Snowflake’s no-copy data sharing

- Google’s AlloyDB integrations

…are enabling use cases where you no longer build or maintain the data movement yourself. The platform replicates or shares the transactional data so you can query it directly in the analytics platform, which removes the hand-built pipeline for specific internal workflows.

Takeaway:

Choose ETL for structured, operational needs. Choose ELT (or zero-ETL) for scale, flexibility, and analytics agility.

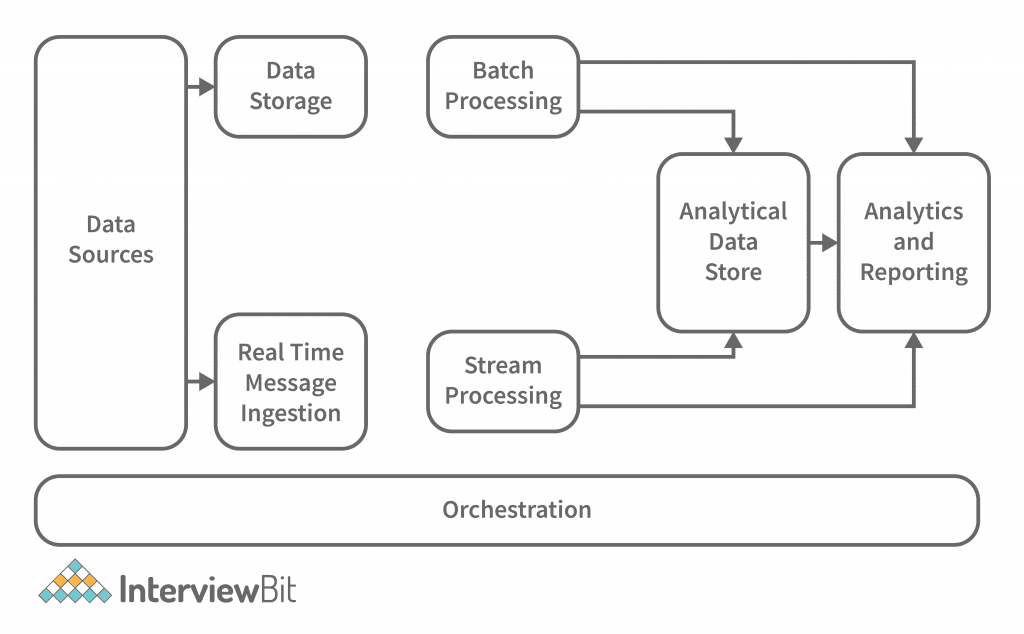

2. Batch vs Real-Time: How Fast Should Data Move?

This pattern defines the cadence of data movement in your pipelines — either in discrete chunks or in a continuous stream.

| Pipeline Type | Latency | Works Best For | Examples |
| --- | --- | --- | --- |
| Batch | Minutes to hours | Historical analysis, periodic reporting, ML training | Daily sales reports, monthly marketing funnel metrics |
| Real-Time | Seconds or less | Alerts, personalization, system monitoring | Fraud detection, stock price updates, operational dashboards |

Batch Pros:

- Easier to manage, debug, and build with traditional tools.

- Less infrastructure overhead for lower-volume use cases.

Real-Time Pros:

- Enables low-latency decisions in product experiences.

- Reduces pipeline lag, allowing for near-instant visibility and action.

What to Watch Out For:

- Real-time systems (Kafka, Flink, Estuary) are powerful, but architectural missteps can become expensive. You need proper handling for:

  - Backpressure

  - Schema evolution

  - Reprocessing failures

- Streaming isn’t always better — for infrequent or heavy workloads, batch may be more cost-effective and reliable.

Tip: Most modern data platforms support hybrid architectures, with real-time ingestion and batched transformation or vice versa. Tailor your approach to business goals and latency needs.
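To make the contrast concrete, the sketch below processes the same events in both styles. The event shape, the `threshold` rule, and the function names are assumptions for illustration, and a Python iterator stands in for a real stream such as a Kafka topic.

```python
from collections import defaultdict
from typing import Iterable, Iterator

# Hypothetical payment events.
events = [
    {"user": "u1", "amount": 20.0},
    {"user": "u2", "amount": 5.0},
    {"user": "u1", "amount": 990.0},
]


def batch_totals(batch: Iterable[dict]) -> dict:
    """Batch: process a complete, bounded set of events in one pass."""
    totals: dict = defaultdict(float)
    for event in batch:
        totals[event["user"]] += event["amount"]
    return dict(totals)


def stream_alerts(stream: Iterable[dict], threshold: float) -> Iterator[str]:
    """Streaming: update running state per event and react as soon as a rule fires."""
    running: dict = defaultdict(float)
    for event in stream:
        running[event["user"]] += event["amount"]
        if running[event["user"]] > threshold:
            yield f"alert:{event['user']}"


totals = batch_totals(events)
alerts = list(stream_alerts(iter(events), threshold=1000.0))
```

The batch function only produces an answer once the whole set has been read, while the streaming function can emit an alert the moment the third event arrives. That difference in when results become available is the latency trade-off described in the table above.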

3. Siloed, Monolith, or Mesh: Organizing Data at Scale

Beyond individual pipelines, your organization’s data architecture model determines how data is owned, shared, and governed. Here are the three dominant approaches:

A. Siloed Domains (Legacy Model)

Each department or team builds its own pipelines and data storage.

- ✅ Easy to set up early on

- ❌ Leads to chaos: conflicting metrics, duplicated data, no governance

- ❌ Sharing data between departments becomes error-prone

Example: Marketing pulls campaign data into a CRM; Finance separately stores transactional records; no one sees a unified view of the customer.

B. Data Monolith (Centralized)

A single massive data warehouse or lake stores all organizational data.

- ✅ Enables centralized control, governance, and consistency

- ✅ Standardizes access and semantics

- ❌ Creates bottlenecks: teams depend on central data engineers

- ❌ Doesn’t scale well for multi-domain, multi-team organizations

Example: All departments query Snowflake via dbt models maintained by a central data team.

C. Data Mesh (Modern, Scalable Approach)

A federated architecture where domain teams own their pipelines and data products, but adhere to shared rules and standards.

- ✅ Encourages cross-functional ownership

- ✅ Balances autonomy and interoperability

- ✅ Promotes scalable governance and observability

- ❌ Requires upfront investment in platform, tooling, and team culture

Example: Product, Finance, and Support each maintain domain-specific pipelines, but all follow global policies for naming, versioning, and schema validation.
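One way to picture "global policies" is as a shared check that every domain team runs against its own data product. The sketch below is an assumption-heavy illustration: the `DataProduct` class, the naming and versioning rules, and the allowed type list are all hypothetical, not a standard.

```python
import re
from dataclasses import dataclass, field

# Hypothetical global policies; a real organization would define its own.
NAME_RULE = re.compile(r"^[a-z]+\.[a-z_]+$")   # "<domain>.<dataset>"
VERSION_RULE = re.compile(r"^\d+\.\d+\.\d+$")  # MAJOR.MINOR.PATCH
ALLOWED_TYPES = {"string", "integer", "float", "timestamp"}


@dataclass
class DataProduct:
    name: str
    version: str
    owner: str
    schema: dict = field(default_factory=dict)  # column name -> type name


def policy_violations(product: DataProduct) -> list:
    """Return a list of human-readable policy violations (empty if compliant)."""
    problems = []
    if not NAME_RULE.match(product.name):
        problems.append("name must look like '<domain>.<dataset>'")
    if not VERSION_RULE.match(product.version):
        problems.append("version must be MAJOR.MINOR.PATCH")
    if not product.owner:
        problems.append("owner is required")
    for column, col_type in product.schema.items():
        if col_type not in ALLOWED_TYPES:
            problems.append(f"column '{column}' has unsupported type '{col_type}'")
    return problems


finance = DataProduct(
    "finance.invoices", "1.2.0", "finance-team",
    {"invoice_id": "string", "total": "float"},
)
support = DataProduct("SupportTickets", "v3", "", {"opened": "datetime"})
```

Each team keeps ownership of its pipelines, but a check like `policy_violations` could run in every team's CI so that autonomy does not erode interoperability.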

Platforms Enabling Mesh Today:

Monte Carlo (data observability), Atlan (data cataloging), Collibra (governance), Estuary (domain-driven stream pipelines)

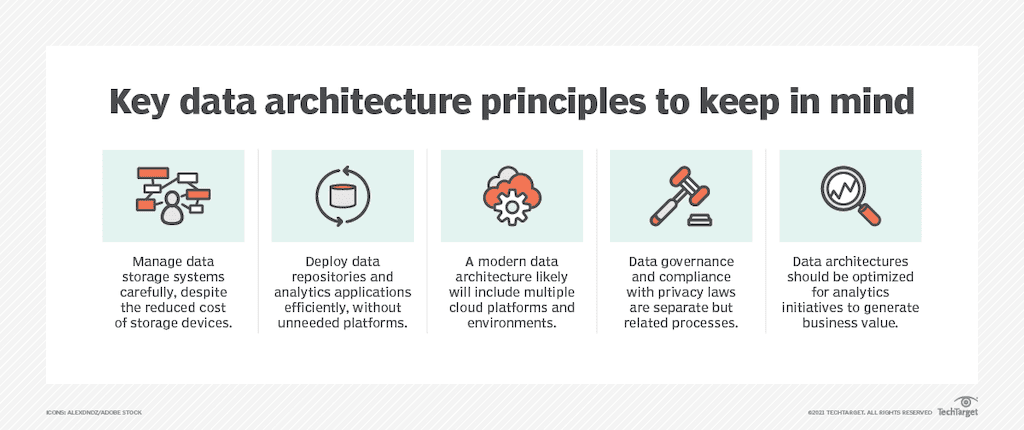

Considerations For Data Pipeline Architecture

Designing a data pipeline architecture is more than just choosing between batch or streaming. The real success lies in the foundational decisions that make pipelines cost-efficient, secure, resilient, and scalable as your data needs evolve.

Below are the critical areas to focus on — many of which are easy to overlook early in the design process:

1. Cost Optimization: Cloud Storage Isn’t Free

Cloud storage may appear inexpensive at first glance, but costs can scale quickly with high data volumes, repeated transformations, and redundant loads.

Best Practices:

- Minimize unnecessary copies of data across environments.

- Use efficient file formats like Parquet (columnar) or Avro (row-based), and table formats like Iceberg, for analytics workloads.

- Be cautious with loading raw data into high-cost systems like Snowflake or BigQuery without first filtering or transforming it.

- Implement data lifecycle policies — e.g., auto-archiving old logs, compressing historical records, or deleting temporary staging data.
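A lifecycle policy can be expressed as a small, testable rule. The sketch below is illustrative only: the thresholds, the `kind` labels, and the function name are assumptions, and in practice you would likely configure equivalent rules in your storage provider rather than in application code.

```python
from datetime import date

# Hypothetical retention thresholds; tune these to your own requirements.
ARCHIVE_LOGS_AFTER_DAYS = 90
DELETE_STAGING_AFTER_DAYS = 7


def lifecycle_action(kind: str, created: date, today: date) -> str:
    """Decide what to do with a stored object based on its kind and age."""
    age_days = (today - created).days
    if kind == "staging" and age_days > DELETE_STAGING_AFTER_DAYS:
        return "delete"   # temporary staging data is safe to remove
    if kind == "log" and age_days > ARCHIVE_LOGS_AFTER_DAYS:
        return "archive"  # old logs move to cheaper storage
    return "keep"
```

For example, `lifecycle_action("staging", date(2025, 1, 1), date(2025, 1, 10))` returns `"delete"` because the object is nine days old and the staging limit is seven.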

Tip: Understand how your vendors bill for data scanned, rows processed, and storage time, not just storage volume.

2. Encryption & Data Security by Design

Security is non-negotiable. Since pipelines handle data in motion, any weak link can introduce vulnerabilities.

Key Security Considerations:

- Encryption in motion: Use TLS/SSL for secure data transit between systems.

- Encryption at rest: Ensure source and destination systems encrypt stored data.

- Secrets management: Use a secure vault or secrets manager (e.g., AWS Secrets Manager, HashiCorp Vault) to handle credentials, not hardcoded configs.

- Zero-trust networking: Especially for cross-cloud pipelines, limit open ports and require token- or certificate-based authentication.
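Two of these points, secrets handling and encryption in transit, can be sketched with the Python standard library. The environment variable name `PIPELINE_DB_PASSWORD` and both function names are assumptions for the example; a secrets manager would typically inject the value into the environment or be queried through its own client.

```python
import os
import ssl


def load_db_password(env_var: str = "PIPELINE_DB_PASSWORD") -> str:
    """Read a credential from the environment instead of hardcoding it."""
    password = os.environ.get(env_var)
    if not password:
        raise RuntimeError(f"missing secret: set {env_var}")
    return password


def strict_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies certificates and hostnames."""
    context = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

Failing fast when the secret is missing is a deliberate choice here: a pipeline that silently falls back to a default credential is harder to audit than one that refuses to start.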

If your pipeline interacts with PII, health data, or financial records, align your security practices with compliance regulations upfront.

3. Compliance & Governance: Think Globally, Plan Ahead

If your product or platform handles customer data, you’re subject to a growing number of privacy laws, and it’s no longer just about GDPR or CCPA. Brazil’s LGPD, India’s DPDP Act, and evolving US state laws are tightening expectations.

Architectural Planning Tips:

- Data lineage: Track where each record originates and how it has been transformed.

- Data minimization: Avoid over-collecting — pipeline logic should enforce this.

- Geolocation awareness: Keep customer data in the correct geographic region when required.

- Build with auditability in mind: Ensure every access or transformation is logged.
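Data minimization and lineage can both be enforced in pipeline code. The following is a minimal sketch under stated assumptions: the allow-list, the field names, and the shape of the lineage entry are hypothetical, and a production system would write these entries to a durable audit log.

```python
import hashlib
import json

# Hypothetical allow-list: only these fields may leave the ingestion step.
ALLOWED_FIELDS = {"customer_id", "country", "order_total"}


def minimize(record: dict) -> dict:
    """Data minimization: keep only the fields downstream consumers need."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


def fingerprint(record: dict) -> str:
    """Stable hash of a record, so lineage can refer to it without storing it."""
    encoded = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def lineage_entry(source: str, step: str, before: dict, after: dict) -> dict:
    """Describe one transformation: where the record came from and what changed."""
    return {
        "source": source,
        "step": step,
        "input_hash": fingerprint(before),
        "output_hash": fingerprint(after),
        "dropped_fields": sorted(set(before) - set(after)),
    }


record = {"customer_id": 7, "country": "BR", "order_total": 42.0, "email": "x@example.com"}
slim = minimize(record)
entry = lineage_entry("orders_api", "minimize", record, slim)
```

Storing hashes rather than the records themselves keeps the audit trail from becoming a second copy of the personal data it is meant to protect.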

Bringing legal and compliance teams into the architecture planning process can prevent costly retrofits later.

4. Scalability & Performance: Design for Growth

Data volumes will increase. So will the number of systems you need to integrate. If your pipelines aren’t built to scale, you’ll hit limits fast.

Performance Best Practices:

- Use distributed processing (e.g., Spark, Flink, or scalable platforms like Estuary) to break up workload bottlenecks.

- Containerize your connectors and workers with a tool like Docker, and use an orchestrator like Kubernetes to scale them horizontally.

- Use auto-scaling infrastructure (e.g., AWS Fargate, Google Cloud Run) to handle traffic spikes without over-provisioning.

- Invest in observability: Metrics, logs, and traces, collected with tools like Prometheus (metrics) or OpenTelemetry (metrics, logs, and tracing), are essential for diagnosing issues before they affect users.

Example: A pipeline that works fine at 1 million events/day may break entirely at 10 million — unless built with scalable processing and backpressure handling in mind.
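Backpressure is easiest to see with a bounded buffer: when the consumer falls behind, the producer is made to wait instead of piling up unbounded work in memory. The sketch below uses Python's standard `queue` and `threading` modules. The function name and the doubling "work" are placeholders, and `None` is reserved as a stop signal, so the input items must not include it.

```python
import queue
import threading


def run_with_backpressure(items, max_in_flight):
    """Process items through a bounded buffer; return results and peak buffer depth."""
    buffer = queue.Queue(maxsize=max_in_flight)
    processed = []
    max_depth = 0

    def consumer():
        while True:
            item = buffer.get()
            if item is None:  # sentinel: no more work
                break
            processed.append(item * 2)  # placeholder for real processing

    worker = threading.Thread(target=consumer)
    worker.start()
    for item in items:
        buffer.put(item)  # blocks while the buffer is full: this is the backpressure
        max_depth = max(max_depth, buffer.qsize())
    buffer.put(None)
    worker.join()
    return processed, max_depth


results, peak = run_with_backpressure(range(10), max_in_flight=2)
```

However fast the producer runs, the buffer never holds more than `max_in_flight` items. Streaming platforms apply the same idea across network boundaries, so a tenfold jump in volume slows the upstream side rather than exhausting memory.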

5. Maintainability & Extensibility

A pipeline should be easy to extend, monitor, and debug — not a black box that only one engineer understands.

Make your pipelines maintainable by:

- Designing them as modular, reusable components.

- Using declarative configuration (e.g., YAML specs) instead of ad-hoc scripts.

- Including automated CI/CD testing for schema validation, broken integrations, and logical regressions.

- Investing in cataloging and documentation for every pipeline entity.
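A declarative spec plus an automated schema check might look like the sketch below. A plain Python dict stands in for a YAML file here to keep the example dependency-free; the spec keys, pipeline names, and both helper functions are assumptions for illustration.

```python
# Hypothetical declarative pipeline spec (would typically live in a YAML file).
PIPELINE_SPEC = {
    "name": "orders_to_warehouse",
    "version": "2.0.0",
    "source": "orders_db",
    "destination": "warehouse.orders",
    "schema": {"order_id": "string", "total": "float", "currency": "string"},
}

# Schema of the previously deployed version, used for regression checks.
PREVIOUS_SCHEMA = {"order_id": "string", "total": "float"}

REQUIRED_KEYS = {"name", "version", "source", "destination", "schema"}


def missing_keys(spec: dict) -> list:
    """Return required spec keys that are absent."""
    return sorted(REQUIRED_KEYS - set(spec))


def breaking_changes(old_schema: dict, new_schema: dict) -> list:
    """Flag removed or retyped columns; newly added columns are treated as safe."""
    problems = []
    for column, col_type in old_schema.items():
        if column not in new_schema:
            problems.append(f"removed column: {column}")
        elif new_schema[column] != col_type:
            problems.append(f"type change: {column} {col_type} -> {new_schema[column]}")
    return problems
```

A CI job could fail the build whenever `missing_keys` or `breaking_changes` returns a non-empty list, which catches schema regressions before they reach downstream consumers.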

Also, make sure to version your data pipelines, not just your code. This allows rollbacks and clear tracking of changes in logic or structure.

Conclusion: Designing Pipelines That Last

In a world where data volume, velocity, and variety continue to surge, the architecture of your data pipelines is no longer a backend concern — it’s a strategic differentiator.

Whether you're delivering real-time personalization, training machine learning models, ensuring regulatory compliance, or powering internal analytics, the way your pipelines are architected will shape your organization’s speed, reliability, and agility.

Here’s what to remember as you design or upgrade your data pipeline architecture:

- Pipeline design isn’t one-size-fits-all. The right architecture depends on your latency needs, operational goals, team structure, and tooling maturity.

- Embrace ELT and streaming where flexibility and scale are critical — but don’t shy away from ETL or batch where predictability and control matter.

- Your broader architecture model — whether siloed, centralized, or federated — will determine how effectively your teams can collaborate and share data.

- Prioritize security, cost-efficiency, governance, and observability. These non-functional requirements often determine long-term success more than technical elegance.

- Be future-ready: Choose tools and platforms that offer modularity, automation, and cloud-native scalability. Don’t lock yourself into brittle pipelines that can’t grow with you.

Ready to Build?

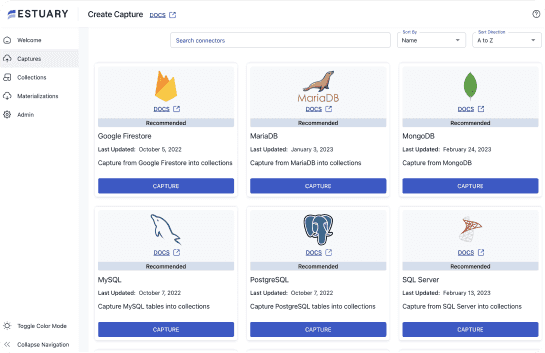

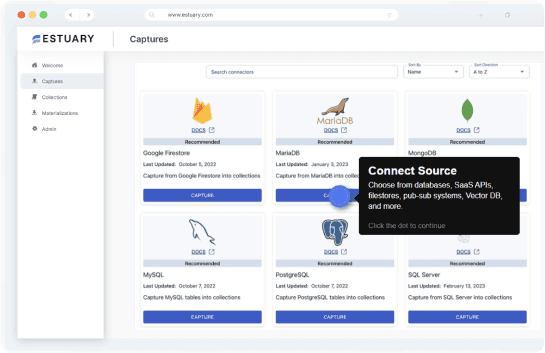

If your data architecture roadmap includes real-time pipelines, consider using a platform like Estuary Flow to eliminate complexity and accelerate time-to-value. Flow enables:

- Low-latency streaming across diverse systems

- Schema-aware transformations and validations

- Scalable, production-grade infrastructure with zero ops burden

You can try Estuary for free or explore more guides and best practices throughout our blog.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.