Why ELT Won’t Fix Your Data Problems

If we’re not careful, the modern data stack and ELT can cause new incarnations of problems that have been plaguing us for years.

Improving your data infrastructure and data culture takes more than a flashy pipeline.

History likes to repeat itself.

To put it another way: we humans have a talent for creating the same problems for ourselves over and over, disguised as something new.

Data stacks and data pipelines are no exception to this rule.

Today’s data infrastructure landscape is dominated by the “modern data stack” (i.e., a data stack centered around a cloud data warehouse) and ELT pipelines.

This paradigm is certainly a step up from older models, but it doesn’t save us from old problems. If we’re not careful, the modern data stack and ELT can cause new incarnations of problems that have been plaguing us for years. Namely:

- An engineering bottleneck in the data lifecycle.

- A disconnect between engineers and data consumers.

- A general mistrust of data.

These problems are avoidable, especially with today’s technology. So, let’s dive into where they really come from, and how you can actually prevent them.

ETL and ELT: a refresher

By now, you’re probably sick of reading comparisons of ETL and ELT data pipelines. But just in case you’re not, here’s one more!

Before data can be operationalized, or put to work meeting business goals, it must be collected and prepared. Data must be extracted from an external source, transformed into the correct shape, and loaded into a storage system.

Data transformation may happen either before or after the data arrives in storage. In simplistic terms, that’s the difference between ETL and ELT pipelines, though in reality the division isn’t that clean.
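To make the ordering concrete, here’s a minimal Python sketch. Everything in it is an assumption made for illustration: the “warehouse” is just a dictionary of in-memory tables, and `extract`, `transform`, `etl`, and `elt` are hypothetical names rather than any real platform’s API.

```python
Row = dict[str, object]

def extract() -> list[Row]:
    # Stand-in for reading from an external source (API, database, files).
    return [
        {"user": " Ada ", "amount": "10.50"},
        {"user": "Grace", "amount": "3"},
    ]

def transform(rows: list[Row]) -> list[Row]:
    # Trim whitespace from names and cast amounts to numbers.
    return [
        {"user": str(r["user"]).strip(), "amount": float(str(r["amount"]))}
        for r in rows
    ]

def etl(warehouse: dict[str, list[Row]]) -> None:
    # ETL: transform inside the pipeline, then load only the shaped result.
    warehouse["orders"] = transform(extract())

def elt(warehouse: dict[str, list[Row]]) -> None:
    # ELT: load the raw data first, then transform it inside the warehouse.
    warehouse["raw_orders"] = extract()
    warehouse["orders"] = transform(warehouse["raw_orders"])

etl_wh: dict[str, list[Row]] = {}
elt_wh: dict[str, list[Row]] = {}
etl(etl_wh)
elt(elt_wh)
```

Both warehouses end up with the same `orders` table. The only difference is that the ELT version also keeps `raw_orders` around, which is where both the flexibility and the clutter come from.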

Today, we tend to talk about ELT pipelines as cutting-edge solutions compared to ETL. The oft-cited reasons are that, by saving transformation for later:

- Data can get to storage quicker.

- We have more flexibility to decide what to do with untransformed data for different use cases.

Neither of these things is necessarily true. We’ll get to why in a minute.

First, I want to touch on why ETL has come to be seen negatively.

The problem with ETL

There’s nothing inherently bad about transformation coming before loading.

The term “ETL” has come to mean more than that. It harkens back to legacy infrastructure that, in many cases, still causes problems today. And it implies a bespoke, hand-coded engineering solution.

In the early days of data engineering, data use cases were generally less diverse and less critical to organizations’ daily operations. On a technical level, on-premises data storage put a cap on how much data a company could reasonably keep.

In light of these two factors, it made sense to hand-code an ETL pipeline for each use case. Data was heavily transformed and pared down prior to loading. This served the dual purpose of saving storage and preparing the data for its intended use.

ETL pipelines weren’t hard to code, but they proved problematic to maintain at scale. And because they’re often baked into an organization’s data foundation, they can be hard to move away from. Today, it’s not uncommon to find enterprises whose data architecture still hinges on thousands of individual, daisy-chained ETL jobs.

The job of the data engineer became to write seemingly endless ETL code and put out fires when things broke. With an ETL-based architecture, it’s hard to have a big enough engineering team to handle this workload, and it’s easy to fall behind as data quantities inevitably scale.

The problem with ETL is that it’s inefficient at scale, which leads to a bottleneck of engineering resources.

This problem shows up in multiple ways:

- Need for constant ad-hoc engineering to handle a variety of ever-changing use cases

- Engineering burnout and difficulty hiring enough engineers to handle the workload

- Disconnect between engineers (who understand the technical problems) and data consumers (who understand the business needs and outcomes)

- Mistrust of data due to inconsistency of results

As data quantity has exploded in recent years, these problems have gone from inconveniences to showstoppers.

Enter the modern data stack.

ELT and the modern data stack

As mentioned earlier, the linchpin of the modern data stack is the cloud data warehouse. The rise of the cloud data warehouse encourages ELT pipelines in two major ways:

- Virtually unlimited, relatively cheap scaling in the cloud removes storage limitations

- Data transformation platforms (a popular example is dbt) are designed to transform data in the warehouse

Factor in the growing frustration with bespoke ETL, and it makes sense that the pendulum swung dramatically in favor of ELT in recent years.

Unfettered ELT seems like a great idea at a glance. We can just put All The Data™ into the warehouse and transform it when we need it, for any purpose! What could possibly go wrong?

ELT problems and the new engineering bottleneck

Here’s what can go wrong: even with the modern data stack and advanced ELT technology, many organizations still find themselves with an engineering bottleneck. It’s just at a different point in the data lifecycle.

When it’s possible to indiscriminately pipe data into a warehouse, inevitably, that’s what people will do, especially if they’re not the same people who will have to clean up the data later. This is not malicious; it happens because data producers, consumers, and engineers all have different priorities and think about data in different ways.

Today, the engineers involved aren’t just data engineers. We also have analytics engineers. The term “analytics engineering” was coined by dbt after the popularity of their analytics platform created a new professional specialty. Platforms aside, this new wave of professionals focuses less on the infrastructure itself and more on transforming and preparing data that consumers can operationalize (in other words, preparing data products). Because job titles can get convoluted, I’ll just be saying “engineers” from here on out.

One way to look at this is: many engineers are dedicated to facilitating the “T” in ELT. However, an unending flood of raw data can transform the data warehouse into a data swamp, and engineers can barely keep their heads above water.

Data consumers require transformed data to meet their business goals. But they’re unable to access it without the help of engineers, because it’s sitting in the warehouse in an unusable state. Depending on what was piped into the warehouse, getting the data into shape can be difficult in all sorts of ways.

This paradigm can lead to problems including:

- Need for constant ad-hoc engineering to handle a variety of ever-changing use cases

- Engineering burnout and difficulty hiring enough engineers to handle the workload

- Disconnect between engineers (who understand the technical problems) and data consumers (who understand the business needs and outcomes)

- Mistrust of data due to inconsistency of results

Sound familiar?

The importance of data governance

Ultimately, the modern data stack isn’t a magic bullet. To determine whether it’s valuable, you must look at it in terms of business outcomes.

This is true of any data infrastructure.

It doesn’t matter how fast data gets into the warehouse, or how much is there. All that matters is how much business value you can derive from operationalized data: that is, transformed data on the other side of the warehouse that’s been put to work.

The best way to make sure operational data exits the warehouse is to ensure that the data entering the warehouse is thoughtfully curated. This doesn’t mean applying full-blown, use-case-specific transformations, but rather, performing basic cleanup and modeling during ingestion.

This sets engineering teams up for success.

There’s no way to get around data governance and modeling. Fortunately, there are many ways to approach this task.

Here are a few things that can help.

Follow new conceptual guidelines, like data mesh

Data mesh doesn’t provide a script for architecture and governance. However, it does offer helpful frameworks to fine-tune your organization’s guidelines in the context of a modern data stack. Data mesh places a heavy emphasis on the human aspects of how data moves through your organization, not just the technical side of infrastructure.

For example, giving domain-driven teams ownership over the full lifecycle of the data can help stakeholders see the bigger picture. Data producers will be less likely to indiscriminately dump data into the warehouse, engineers will be more in tune with how the data needs to be used, and consumers can develop more trust in their data product.

Build quality control into your pipelines

Your inbound data pipeline is in the perfect position to gatekeep data quality. Use this to your advantage!

For a given data source, your pipeline should be watching the shape of your data; for example, by adhering to a schema. Ideally, it should apply basic transformations to data that doesn’t fit the schema before landing it in the warehouse.

If the pipeline is asked to bring in messy data that it can’t automatically clean up, it should halt, or at least alert you to the problem.
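Here’s a rough sketch of what that kind of gate might look like in plain Python. It’s an illustration under stated assumptions, not a recipe: `SCHEMA`, `SchemaViolation`, `clean`, and `ingest` are hypothetical names, the schema is a simple field-to-type mapping, and the warehouse is a list standing in for a real table. A real pipeline would more likely lean on a schema language and the validation features of your platform.

```python
# Hypothetical schema for one data source: field name -> required type.
SCHEMA = {"order_id": int, "email": str, "amount": float}

class SchemaViolation(Exception):
    """Raised when a record can't be cleaned up automatically."""

def clean(record: dict) -> dict:
    """Coerce a record to SCHEMA with basic transforms, or raise."""
    cleaned = {}
    for name, expected in SCHEMA.items():
        value = record.get(name)
        if value is None:
            raise SchemaViolation(f"missing field {name!r}")
        if isinstance(value, str):
            value = value.strip()  # basic cleanup before casting
        try:
            cleaned[name] = expected(value)
        except (TypeError, ValueError) as exc:
            raise SchemaViolation(f"bad value for {name!r}: {value!r}") from exc
    return cleaned  # fields not named in SCHEMA are dropped

def ingest(records, warehouse, max_rejects=0):
    """Load cleaned records; halt if too many can't be cleaned.

    Returns the list of reject messages so the caller can alert on them.
    """
    staged, rejects = [], []
    for record in records:
        try:
            staged.append(clean(record))
        except SchemaViolation as exc:
            rejects.append(str(exc))
            if len(rejects) > max_rejects:
                raise  # halt before anything lands in the warehouse
    warehouse.extend(staged)
    return rejects

warehouse = []
rejects = ingest(
    [
        {"order_id": " 7 ", "email": "a@example.com ", "amount": "12.50"},
        {"order_id": "n/a", "email": "b@example.com", "amount": 3},
    ],
    warehouse,
    max_rejects=1,
)
```

In this run, the first record is tidied up and loaded, while the second is rejected and reported rather than landing in the warehouse as-is. With the default `max_rejects=0`, the same input would halt the load entirely. Which behavior you want is a governance decision, not a technical one.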

And yes, this can mean that “true ELT” never exists, because transforms occur both before and after the warehouse.

It’s possible to accomplish this relatively easily without sacrificing speed. And because the pre-loading transforms are basic, they shouldn’t limit what you’re able to do with the data. On the contrary, starting with clean data in the warehouse offers more flexibility.

All of this is thanks to the huge proliferation of data platforms on the market today. You might use a tool like Airflow to orchestrate and monitor your pipelines. Or, you might set up an observability platform to make your existing ELT pipelines more transparent.

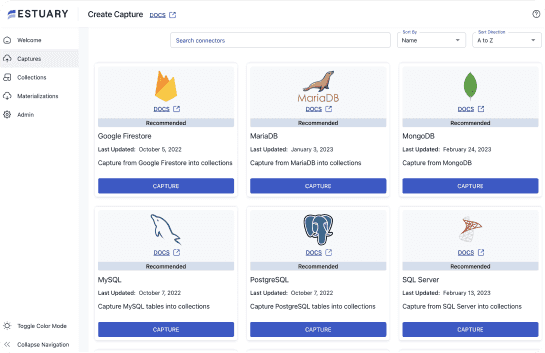

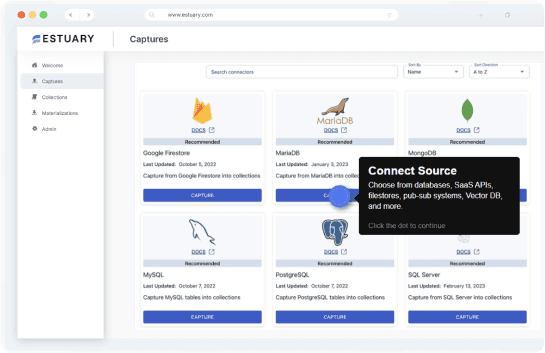

At Estuary, we’re offering our platform, Flow, as an alternative solution.

Flow is a real-time data operations platform designed to create scalable data pipelines that connect all the components of your stack. It combines the function of an ELT platform with data orchestration features.

Data that comes through Flow must adhere to a schema, or be transformed on the fly. Live reporting and monitoring are available, and the platform is architected to recover from failure.

Beyond ELT and the modern data stack

Managing data and stakeholders within a company will always require strategic thinking and governance.

Newer paradigms don’t change that fact, though it’s tempting to think so. The reality is that the problems that can plague ELT and the modern data stack are quite similar to those that make ETL unsustainable.

Luckily, today there are plenty of tools available to implement effective governance and data modeling at scale. Using them effectively, while challenging, is very much possible.

Want to try Flow? You can get started for free here.

About the author

Olivia Iannone is a technical writer who creates clear, accessible content on data engineering, real-time systems, and developer tools.