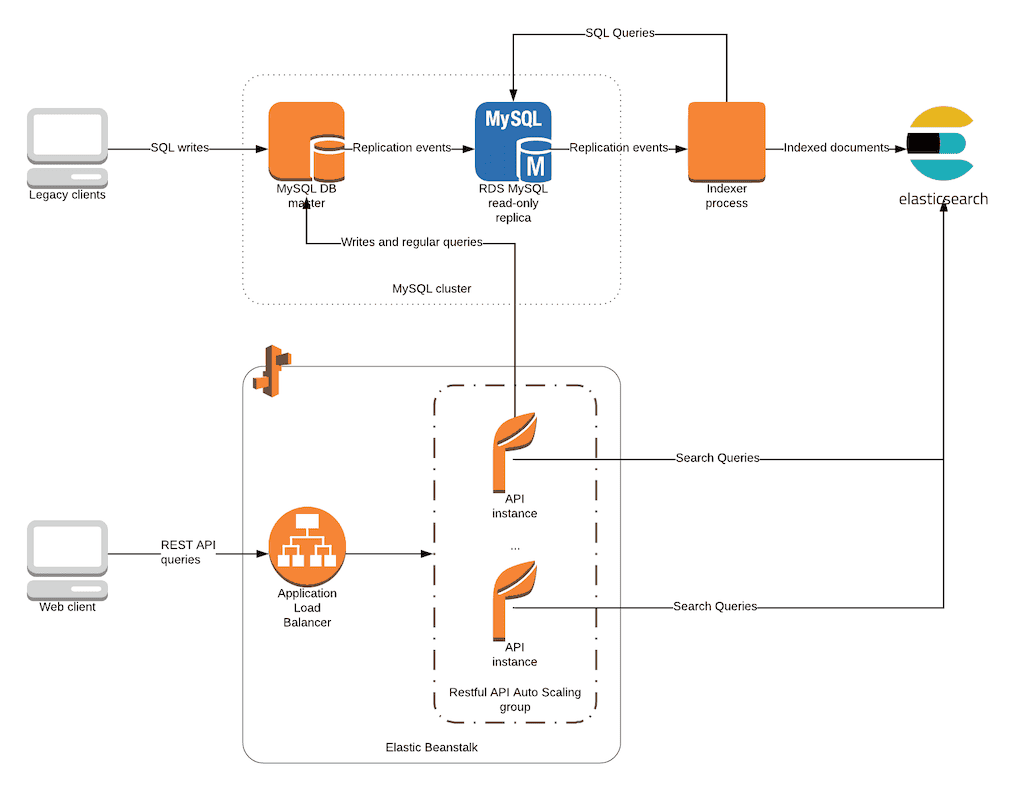

To power fast search, analytics, or observability on top of your MySQL data, you need a way to move that data into Elasticsearch efficiently and in real time. But building a reliable pipeline between the two can be complex, especially if you’re relying on custom scripts or tools that don’t support schema changes or deletions.

In this guide, you’ll learn how to sync MySQL to Elasticsearch using two proven methods:

- A no-code, real-time pipeline using Estuary, powered by Change Data Capture (CDC)

- A manual approach using Logstash with JDBC, based on Elastic’s documented method

Whether you're building a full-text search feature, real-time dashboards, or log analytics, this tutorial will help you set up a robust MySQL to Elasticsearch integration in just a few minutes.

Skip the complexity. Set up your MySQL to Elasticsearch pipeline in real time with Estuary →

What Is MySQL?

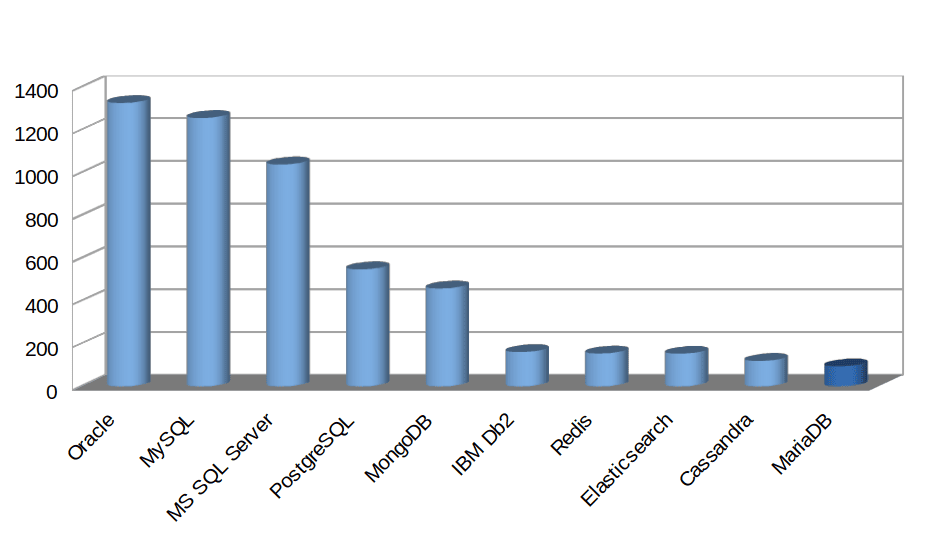

MySQL is one of the world’s most widely used open-source relational database management systems (RDBMS). It’s the backbone of countless web and enterprise applications — from eCommerce platforms to SaaS tools and content management systems.

MySQL is built on SQL (Structured Query Language) and offers a highly stable, performant, and well-supported database layer. It’s backed by Oracle and maintained through regular updates.

MySQL is ideal for use cases that require:

- ACID-compliant transactions

- Structured data relationships

- CRUD operations at scale

- High availability through replication or clustering

It's a reliable primary data store — but not built for fast, flexible search at scale. That’s where Elasticsearch comes in.

What Is Elasticsearch?

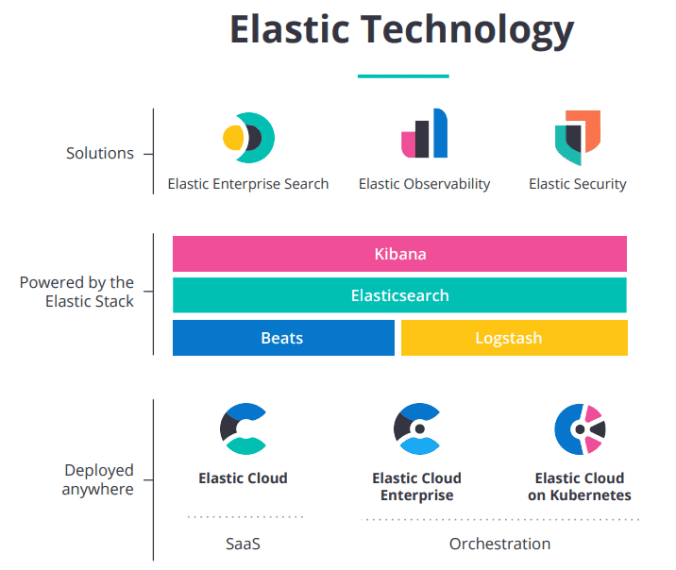

Elasticsearch is a distributed, open-source search and analytics engine designed for fast, scalable querying across all types of data — structured, semi-structured, unstructured, and geospatial. It’s built on Apache Lucene and forms the core of the Elastic Stack (formerly ELK Stack), which also includes Logstash, Kibana, and Beats.

Elasticsearch excels at full-text search, real-time indexing, and log analytics. It’s used by companies like GitHub, Cisco, and Citigroup to power search bars, monitor infrastructure, and surface insights from large volumes of streaming data.

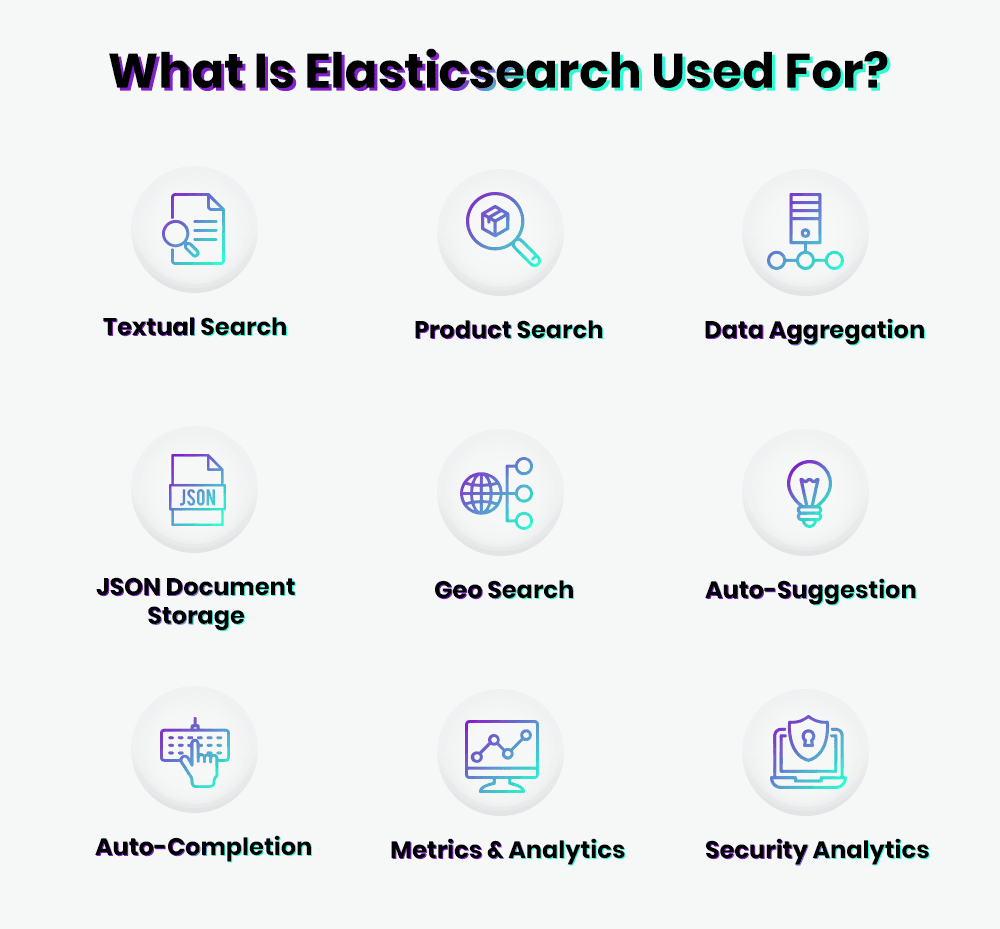

Common Use Cases

- Search bars and autocomplete for websites and apps

- Real-time log and metrics analysis (e.g., DevOps, observability)

- Security analytics and threat detection

- Business intelligence dashboards

- Application performance monitoring (APM)

- E-commerce product search and personalization

- Geospatial queries and location-based filtering

Elasticsearch supports official clients in popular languages like Python, Java, JavaScript (Node.js), Go, .NET, and PHP, making it a versatile choice for developers building search-driven applications.

Should You Use Elasticsearch Over MySQL?

While both MySQL and Elasticsearch store and query data, they’re built for fundamentally different purposes — and they work best when used together, not as replacements for each other.

- MySQL is your source of truth — a transactional RDBMS designed for structured data, relationships, and ACID-compliant operations.

- Elasticsearch is an analytics and search engine, optimized for full-text search, real-time indexing, and lightning-fast querying over massive datasets.

Why You Should Combine Them

Use MySQL to:

- Manage core application data

- Handle structured relationships (foreign keys, joins)

- Perform inserts, updates, deletes (CRUD operations)

Use Elasticsearch to:

- Enable fast, fuzzy, and complex searches

- Power real-time dashboards and analytics

- Index and query logs, metrics, and unstructured data

By syncing data from MySQL to Elasticsearch, you can:

- Retain MySQL as your reliable operational database

- Offload complex search and aggregation to Elasticsearch

- Maintain resilience — even if Elasticsearch goes down, your source data is intact

Why Replacing MySQL with Elasticsearch Doesn’t Work

- Elasticsearch isn’t built for transactional data or relational integrity

- Mapping complex schemas from MySQL to JSON docs can be messy

- Handling updates and deletes requires custom logic

- It’s difficult to enforce constraints like foreign keys or uniqueness

- Elasticsearch has limited support for multi-table relationships

The best approach: Use MySQL as your primary database and Elasticsearch as a high-performance, secondary search layer.

In the next section, we’ll explore two ways to sync data between the two platforms, including a real-time, no-code method using Estuary.

Method 1: Integrate MySQL and Elasticsearch with Estuary

If you're looking for a fast, reliable, and maintenance-free way to sync MySQL with Elasticsearch — without writing code — Estuary is the best solution.

Estuary uses log-based Change Data Capture (CDC) to stream inserts, updates, and deletes from MySQL to Elasticsearch in real time. It handles schema changes automatically and can be configured in just a few clicks through its web app.

"Estuary is 1000x times better than LogStash or Elastic Enterprise Data Ingestion Tool (for moving data from MySQL to Elastic)." - Pompato

Before You Start

Make sure you have:

- Access to a MySQL database (host, port, user)

- An Elasticsearch cluster (Cloud or self-hosted)

- A free Estuary account

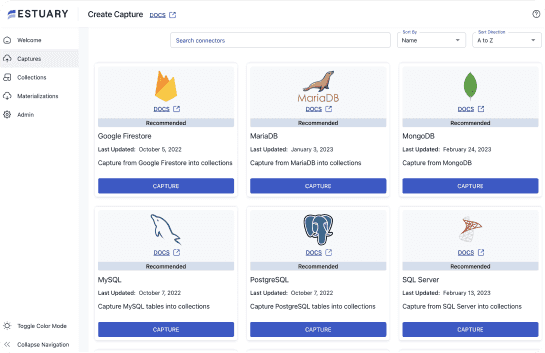

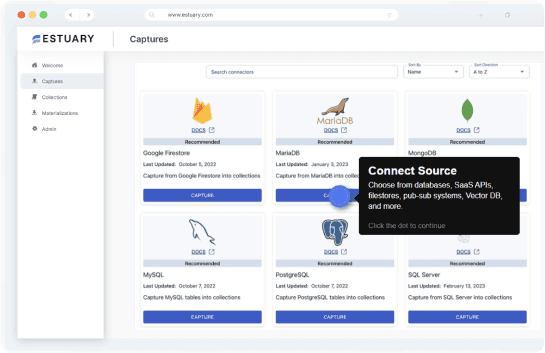

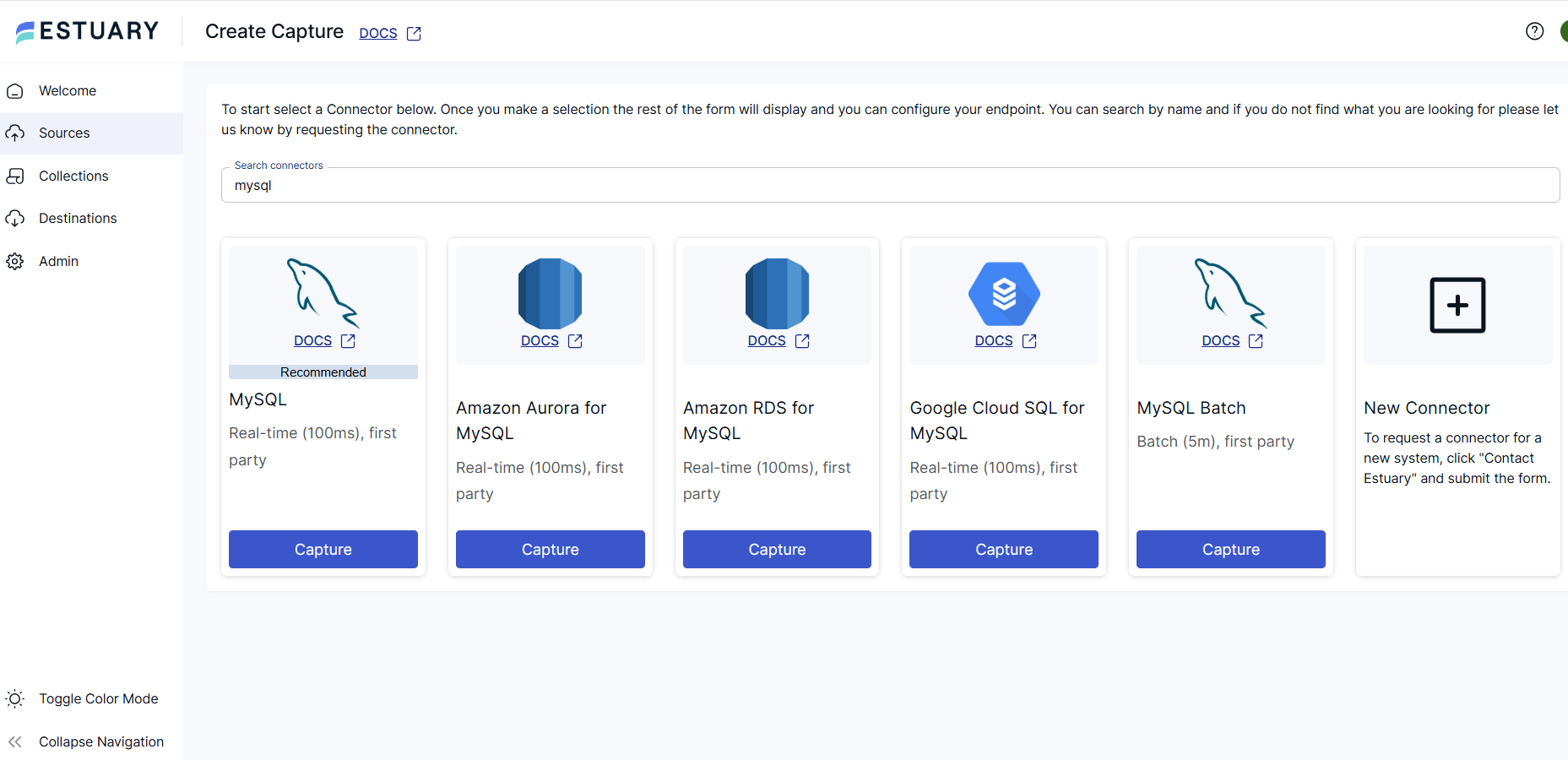

Step 1: Capture Data from MySQL

- Log in to Estuary

- Click Sources > + New Capture

- Select the MySQL connector

- Enter your MySQL credentials (host, database, user, password)

- Click Next — Estuary will list all available tables

- Select the tables you want to sync and click Save & Publish

Estuary will now create CDC-based data collections for each selected MySQL table.

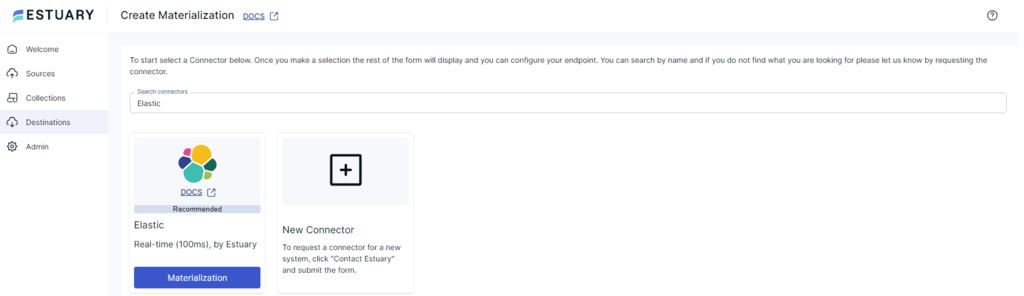

Step 2: Materialize to Elasticsearch

- Click Materialize Collections on the capture confirmation screen

- Choose the Elasticsearch connector

- Enter your Elastic cluster URL, username, and password

- Map each MySQL data collection to a target Elasticsearch index

- Optionally apply field overrides for type mapping

- Click Next > Save & Publish

All historical data from MySQL will be copied into Elasticsearch, and any new data changes will sync automatically within milliseconds.

Enterprise-Ready Features

- Secure Deployment Options: Estuary supports fully managed, private cloud, or BYOC deployments

- Automatic retries & checkpointing: Ensures data consistency and resilience

- Schema evolution: Adjusts to changes in MySQL schema without pipeline breakage

- Low-latency streaming: Ideal for powering dashboards, APIs, and search experiences

🔗 Try Estuary for free or reach out to our team for help with production pipelines and enterprise deployments.

For more help with this method, see the Estuary documentation on:

Method 2: Integrate MySQL and Elasticsearch with Elastic

Alternatively, you can use Elastic’s documented process using Logstash, which we’ve adapted below.

Note that this method is more challenging to implement, and won’t capture MySQL DELETE events. Though it’s an event-based integration, it’s not log-based change data capture; it instead relies on timestamps. Learn more about types of MySQL CDC here.

Gather Credentials

To specify the details of the connection, you’ll need your Cloud ID. Go to the Kibana main menu and select “Management” > “Integrations” > “View Deployment Details”.

To authenticate, you’ll use your Elasticsearch API key.

Get Logstash and the MySQL JDBC driver

- Download and install Logstash.

- Download and unpack the JDBC driver and take note of the driver’s location for later use.

Add Timestamps to MySQL Tables

For each table you plan to move to Elasticsearch, you’ll need a timestamp column with the last updated time.

This example adds a column called modification_time to the table my_table in the database my_db.

plaintextUSE my_db;

ALTER TABLE my_table

ADD modification_time TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP;Set up a Logstash pipeline with the JDBC input plugin

Next, you’ll need to set up a pipeline to ingest data from MySQL via the JDBC plugin

- Create a new file called

jdbc.confin<localpath>/logstash-7.12.0.

Paste the code below into the file to generate the Logstash pipeline, being sure to substitute your credentials and configuration details.

plaintextinput {

jdbc {

jdbc_driver_library => "<driverpath>/mysql-connector-java-<versionNumber>.jar"

jdbc_driver_class => "com.mysql.jdbc.Driver"

jdbc_connection_string => "jdbc:mysql://<MySQL host>:3306/es_db"

jdbc_user => "<myusername>"

jdbc_password => "<mypassword>"

jdbc_paging_enabled => true

tracking_column => "unix_ts_in_secs"

use_column_value => true

tracking_column_type => "numeric"

schedule => "*/5 * * * * *"

statement => "SELECT *, UNIX_TIMESTAMP(modification_time) AS unix_ts_in_secs FROM es_table WHERE (UNIX_TIMESTAMP(modification_time) > :sql_last_value AND modification_time < NOW()) ORDER BY modification_time ASC"

}

}

filter {

mutate {

copy => { "id" => "[@metadata][_id]"}

remove_field => ["id", "@version", "unix_ts_in_secs"]

}

}

output {

elasticsearch {

index => "rdbms_idx"

ilm_enabled => false

cloud_id => "<DeploymentName>:<ID>"

cloud_auth => "elastic:<Password>"

ssl => true

api_key => "<myAPIid:myAPIkey>"

}

}With the new configuration saved, launch Logstash:

plaintextbin/logstash -f jdbc.confThis method works for lightweight ingestion tasks, but doesn’t scale well for complex pipelines or production-grade applications.

✅ For log-based CDC, schema-aware syncing, and automated maintenance, Estuary is a faster and more reliable option.

Conclusion

While MySQL excels at storing structured, transactional data, Elasticsearch is purpose-built for lightning-fast search and real-time analytics. Connecting the two allows you to power advanced use cases — from full-text search to observability dashboards — without compromising your core database.

You have two main options:

- Estuary: A no-code, real-time pipeline with built-in support for CDC, schema changes, retries, and high-volume scalability. Ideal for production systems that need to stay in sync with low latency.

- Logstash with JDBC: A DIY option that works for simpler use cases, but lacks deletion tracking, real-time sync, and automation, and requires significant ongoing maintenance.

Our recommendation: use MySQL as your system of record, and Elasticsearch as a secondary index for search and analytics. Let a streaming tool like Estuary keep them perfectly in sync, so you can focus on building.

Next Steps

- Try Estuary for free →

- Contact our team for help with enterprise-grade deployment

- Explore our full connector library: Sources and Destinations

Related Articles From Estuary

FAQs

Can I use Logstash to connect MySQL to Elasticsearch?

How do I keep MySQL and Elasticsearch in sync in real time?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.