Across 15 years of working for tech companies of all stages and sizes, one thing has stayed consistent: it’s only easy to do what you want with data while a company is very small and its complexity is low. Eventually, requirements around governance, siloed teams, and scale lead to a state where a PhD in computer science and several days are required to set up relatively trivial insights or, worse yet, to pull important business metrics (how long would it take to combine novel sales and product data at your company?). This is a common situation, but one that can be avoided with a little planning and the right systems and conventions in place.

Step 1: Pick a place to put your data

Your goal is to make it easy for anyone in your organization to derive tomorrow’s insights, including ones that haven’t yet been contemplated, from the business facts of today (or months ago). It’s impossible to know now what will be important in the future, so retaining historical business data in its original, unaggregated form is the best way to keep your options open. This is the idea behind the much-hyped data lake. The most logical place to do this today is cloud storage, since it provides cost-efficient storage, strong durability, and access bandwidth that scales with demand.
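To make "original, unaggregated form" concrete, here is a minimal sketch of writing raw events under date-partitioned keys. The in-memory `bucket` dictionary is a hypothetical stand-in for a cloud storage bucket, and the key layout (`raw/source=.../date=.../`) and the event fields are assumptions chosen for illustration, not a required convention.

```python
import json
from datetime import datetime, timezone

# Hypothetical stand-in for a cloud bucket: maps object key -> stored bytes.
bucket = {}


def object_key(source, event_id, occurred_at):
    """Build a date-partitioned key so later readers can scan by source and day."""
    day = occurred_at.astimezone(timezone.utc).strftime("%Y-%m-%d")
    return f"raw/source={source}/date={day}/{event_id}.json"


def store_raw_event(source, event):
    """Store the event exactly as received: no aggregation, no dropped fields."""
    occurred_at = datetime.fromisoformat(event["occurred_at"])
    key = object_key(source, event["id"], occurred_at)
    bucket[key] = json.dumps(event, sort_keys=True).encode("utf-8")
    return key


# Illustrative event; the field names are assumptions.
key = store_raw_event(
    "sales",
    {"id": "evt-1", "occurred_at": "2021-03-04T10:15:00+00:00", "amount": 120.0},
)
print(key)
```

Because every field is kept, a question nobody has thought of yet can still be answered later by rereading the same objects.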

Step 2: Querying and Governance

Just storing the data isn’t enough. To provide real value, there needs to be a simple way to get the right insights to the right people without granting everyone in the organization the ability to see sensitive content, like recent offer letters from HR, customer PII, or financial details. A few key things to consider here are:

1. How to organize data so that it can be discovered and leveraged quickly and efficiently.

2. Where governance should be upheld. Should we restrict access to the data lake itself, or govern the type of data that each role can pull from it?

The first point can be solved by technology, and there are several reasonably good options out there. The front runner is usually an OLAP warehouse like Snowflake or BigQuery hooked up to Airflow or dbt. That will work for most companies, particularly for one-off or infrequently rebuilt insights. It becomes expensive and slow once an insight is relied on and has to be recomputed again and again, and it simply cannot satisfy emerging real-time needs.

The simplest approach to the second point is to grant access to the actual data lake to only the minimum number of users who require it. The majority of people should see only materialized views, each containing the subset of dimensions that they care about and are permitted to access. This keeps queries fast and cheap, upholds governance, and ensures that users see what they actually care about.
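As a rough illustration of per-role views, the sketch below projects raw records down to the columns each role is allowed to see. The record fields, role names, and the `VIEW_COLUMNS` policy are all hypothetical. A real deployment would enforce this in the warehouse or the materialization layer rather than in application code.

```python
# Hypothetical raw records as they might sit in the lake, sensitive fields included.
RAW_ORDERS = [
    {"order_id": 1, "customer_email": "a@example.com", "region": "EU", "amount": 120.0},
    {"order_id": 2, "customer_email": "b@example.com", "region": "US", "amount": 80.0},
    {"order_id": 3, "customer_email": "c@example.com", "region": "EU", "amount": 50.0},
]

# Assumed policy: each role sees only the dimensions it needs.
VIEW_COLUMNS = {
    "finance": ("order_id", "region", "amount"),
    "marketing": ("region",),
}


def materialize_view(role, records):
    """Project raw records down to the columns the role is allowed to see."""
    if role not in VIEW_COLUMNS:
        raise PermissionError(f"no view is defined for role {role!r}")
    columns = VIEW_COLUMNS[role]
    return [{col: rec[col] for col in columns} for rec in records]


finance_view = materialize_view("finance", RAW_ORDERS)
marketing_view = materialize_view("marketing", RAW_ORDERS)
```

Neither view carries `customer_email`, so PII never leaves the lake, and a role without a defined view gets no data at all.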

Step 3: Access

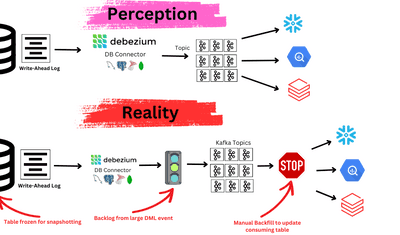

Most solutions end up with two parallel architectures that solve the same problem. Engineering will have pipelines that are built to be reactive, event-oriented, and potentially real-time (maybe using Kafka), while business users will work off a separate source of truth that’s refreshed less frequently from a different source data set. Rather than maintaining separate pipelines, selecting an architecture that accomplishes both goals through one pipeline and one source of truth greatly limits system surface area and makes for a better business solution.

To get it right, the key is access. Data should be accessible to any system that a business or engineering team wants to use, whether it’s a query tool like Snowflake, a SaaS product like Salesforce that a business unit relies on, or a specialized engineering database like Aerospike. Those services need the option of real-time updates so that workaround pipelines aren’t created when a real-time use case presents itself. A single data source should power all services and reports for the sake of consistency, accuracy, ease of maintenance, and reduced complexity.

The Result

The resulting system should have a unified, real-time data lake that captures data from every part of the business. Users can create views of the data that are specific to their line of business (materializations). These views update continuously as new business events occur and provide the right level of access for any persona to meet governance requirements. The unified data lake powers all services, reports, and SaaS with a single source of truth.
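A toy sketch of that shape: one append-only log feeds several views, and each view is updated incrementally as events arrive instead of being rebuilt on a schedule. The class names and event fields here are hypothetical and kept in memory for illustration. This is not Estuary Flow's API, only the general pattern.

```python
from collections import defaultdict


class RevenueByRegion:
    """Hypothetical materialization: running revenue total per region."""

    def __init__(self):
        self.totals = defaultdict(float)

    def apply(self, event):
        self.totals[event["region"]] += event["amount"]


class OrderCount:
    """Hypothetical materialization: running count of orders."""

    def __init__(self):
        self.count = 0

    def apply(self, event):
        self.count += 1


class EventLog:
    """Single source of truth: an append-only log that pushes each event to every view."""

    def __init__(self):
        self.events = []
        self.views = []

    def subscribe(self, view):
        # Replay retained history so a view added later reflects every past event.
        for event in self.events:
            view.apply(event)
        self.views.append(view)

    def append(self, event):
        self.events.append(event)
        for view in self.views:
            view.apply(event)


log = EventLog()
revenue = RevenueByRegion()
log.subscribe(revenue)
log.append({"region": "EU", "amount": 120.0})
log.append({"region": "US", "amount": 80.0})

orders = OrderCount()
log.subscribe(orders)  # subscribed late; catches up from retained history
log.append({"region": "EU", "amount": 50.0})
```

Because the log retains every raw event, a view defined months later can still be built from the full history, which is the practical payoff of keeping data unaggregated.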

This is a paradigm that is incredibly difficult to achieve from an engineering perspective, but one we believe needs to be the foundation of any business.

At Estuary, we’re building the tech to make it easy to create and maintain this type of data lake and, with Estuary Flow, to enact continuously materialized views written in SQL for instant, real-time access to specific sets of data which fulfill each team or application’s use case.

__________

2023 Update:

A lot has changed (and a lot of tech decisions have been made) since we wrote this article... but our values haven't.

Most importantly, Estuary Flow's managed UI-based service is live. Sign up to use it free or learn more about Flow here!